Comments (5)

像 openai RESTful API 一样?

Yes, this kind of regular HTTP client would be very convenient for use and integration. Also, as a suggestion, the preprocess and postprocess models can be implemented with the Triton framework ensemble mode, so that all steps can be completed with just one request to the interface. Just like the GPT example in the FasterTransformer project.

https://github.com/triton-inference-server/fastertransformer_backend/tree/main/all_models/gpt

from lmdeploy.

like openai RESTful APIs?

from lmdeploy.

We are working on it now.

from lmdeploy.

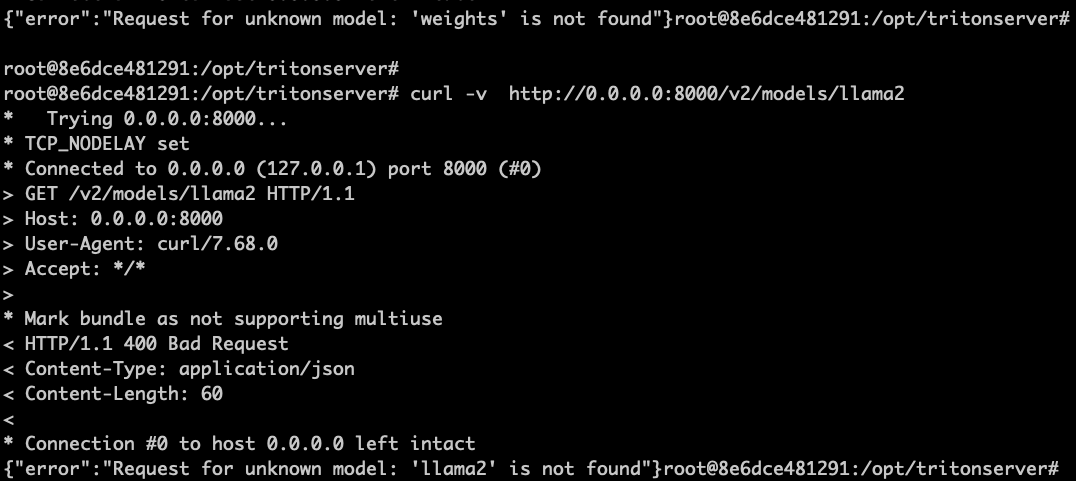

Hi I opened the --allow-http=1 option before running the service_docker_up.sh, but "curl -v http://0.0.0.0:8000/v2/models/llama2" always return "unknown model"(grpc interface works well), does it because restful api is not supported now? thank you.

from lmdeploy.

Hi I opened the --allow-http=1 option before running the service_docker_up.sh, but "curl -v http://0.0.0.0:8000/v2/models/llama2" always return "unknown model"(grpc interface works well), does it because restful api is not supported now? thank you.

The restful APIs were added. But they are not through triton server. For more details please refer to https://github.com/InternLM/lmdeploy/blob/main/docs/en/restful_api.md

from lmdeploy.

Related Issues (20)

- [Feature] specify gpus in pipeline

- [Feature] Layer Wise Calibration and Quantization of Models (To quantize model on Low VRAM GPU) HOT 4

- 使用KV cache(int8或int4)量化internvl-v1.5后,显存反而增加了 HOT 7

- [Feature] Support for CogVLM2 HOT 1

- [Bug] qwen1.5-14b-chat使用turbomind进行推理,会出现输出重复的情况 HOT 9

- lmdeploy搭建的服务,是否支持通过传输stop_words的方式来控制模型输出 HOT 4

- Are there any plans to support CUDA 11.7? HOT 4

- [Bug] got error when pip install. docker img works though, python ver3.11 HOT 9

- [Bug] It seems the memory of internlm2 is bad when input prompt is longtext. HOT 8

- engine_config = TurbomindEngineConfig(tp=2, quant_policy=0, cache_max_entry_count=0.2, session_len=4096)# quant_policy=8, self.pipe = pipeline("InternVL-Chat-V1-5", backend_config=engine_config) 其他配置参数不变,改变quant_policy=8,0,4 ,显存占用和推理速度没有任何改变是为什么呢?

- [Feature]- Support for the microsoft/Phi-3-vision-128k-instruct Vision Model HOT 1

- [Bug] 部署InternLM-XComposer2服务的时候,请求报错的时候;整个卡住,不返回500,并且其他请求都进不去 HOT 2

- LMDeploy-0.4.1运行qwen1.5 110B,推理长时间无结果 HOT 2

- [Bug] Two different tokenizers are used in benchmarks HOT 3

- Wonderful work!,请问有关于VLM类模型api_server的性能测试内容吗? HOT 2

- [Bug] awq量化4bit 设置 --tp 4 报错 HOT 1

- [Feature] A series of various optimization points HOT 6

- [Feature] 跑vlm多卡时,显存不太均匀,0号卡特别高,容易oom HOT 2

- [QA] api传非https图片调用模型 HOT 5

- 换用 LLM 基座的 LLaVA 模型适配 HOT 1

Recommend Projects

-

React

React

A declarative, efficient, and flexible JavaScript library for building user interfaces.

-

Vue.js

🖖 Vue.js is a progressive, incrementally-adoptable JavaScript framework for building UI on the web.

-

Typescript

Typescript

TypeScript is a superset of JavaScript that compiles to clean JavaScript output.

-

TensorFlow

An Open Source Machine Learning Framework for Everyone

-

Django

The Web framework for perfectionists with deadlines.

-

Laravel

A PHP framework for web artisans

-

D3

Bring data to life with SVG, Canvas and HTML. 📊📈🎉

-

Recommend Topics

-

javascript

JavaScript (JS) is a lightweight interpreted programming language with first-class functions.

-

web

Some thing interesting about web. New door for the world.

-

server

A server is a program made to process requests and deliver data to clients.

-

Machine learning

Machine learning is a way of modeling and interpreting data that allows a piece of software to respond intelligently.

-

Visualization

Some thing interesting about visualization, use data art

-

Game

Some thing interesting about game, make everyone happy.

Recommend Org

-

Facebook

We are working to build community through open source technology. NB: members must have two-factor auth.

-

Microsoft

Open source projects and samples from Microsoft.

-

Google

Google ❤️ Open Source for everyone.

-

Alibaba

Alibaba Open Source for everyone

-

D3

Data-Driven Documents codes.

-

Tencent

China tencent open source team.

from lmdeploy.