- When training with the default model provided by this repo, I encountered NaN.

  [Solved]: I initialized the upsample layer weights to 1.0 and the biases to 0.0.

- When training with multiple GPUs, I encountered NaN again.

  [Solved]: Use one GPU with batch_size = 4.

I'm not sure how to avoid NaN.

Training works with the initialized upsample layer on one GPU.
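The upsample workaround above can be sketched in PyTorch as follows. This is a minimal sketch, not the repo's code: the layer sizes (80 mel channels, kernel size 1024, stride 256) are assumed to mirror WaveGlow's mel upsampler, and whether constant initialization helps beyond avoiding the NaN reported here is untested.

```python
import torch

# Hypothetical stand-in for WaveGlow's mel upsampler. The sizes
# (80 mel channels, kernel 1024, stride 256) are assumptions for illustration.
upsample = torch.nn.ConvTranspose1d(80, 80, kernel_size=1024, stride=256)

# Workaround described in the comment: constant weights of 1.0, zero bias.
with torch.no_grad():
    torch.nn.init.constant_(upsample.weight, 1.0)
    torch.nn.init.zeros_(upsample.bias)

# Quick check: upsample a dummy mel spectrogram with 10 frames.
mel = torch.randn(1, 80, 10)
out = upsample(mel)
print(out.shape)  # torch.Size([1, 80, 3328])
```

With no padding, the output length is (10 - 1) * 256 + 1024 = 3328 samples.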

from waveglow.

I also got a negative loss and NaN during training.

I moved WaveGlow into my own training framework and trained it from scratch with DataParallel. The NLL seems to recover from NaN and then become NaN again.

Also, the inferred samples reach values as large as 1e17.
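To catch exploding outputs like these early, one option is to scan inferred samples for non-finite or out-of-range values. This is a hypothetical helper, not part of the repo; the bound of 1.0 assumes float audio in roughly [-1, 1] before any int16 scaling.

```python
import math

def find_bad_samples(samples, limit=1.0):
    """Return indices of samples that are NaN/inf or whose magnitude exceeds `limit`.

    `limit=1.0` is an assumed bound for float audio before any int16 scaling.
    """
    return [i for i, s in enumerate(samples) if not math.isfinite(s) or abs(s) > limit]

print(find_bad_samples([0.1, -0.5, 1e17, float("nan")]))  # [2, 3]
```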

I used these hparams:

- sigma = 1.0
- n_flows = 12
- n_group = 8
- n_early_every = 4
- n_early_size = 2
- wn = Config(n_layers=8, n_channels=256, kernel_size=3)
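Assuming WaveGlow's early-output rule (at every `n_early_every`-th flow, excluding the first, `n_early_size` channels are emitted early and dropped from the remaining channels), the hparams above give the per-flow channel counts below. The function name is hypothetical; it only does the bookkeeping and builds no model.

```python
def remaining_channels(n_flows, n_group, n_early_every, n_early_size):
    """Channels entering each flow, assuming the early-output rule described above."""
    channels = []
    remaining = n_group
    for k in range(n_flows):
        # Early output happens at k = n_early_every, 2 * n_early_every, ... (never at k = 0).
        if k % n_early_every == 0 and k > 0:
            remaining -= n_early_size
        channels.append(remaining)
    return channels

hparams = dict(sigma=1.0, n_flows=12, n_group=8, n_early_every=4, n_early_size=2)
per_flow = remaining_channels(
    hparams["n_flows"], hparams["n_group"], hparams["n_early_every"], hparams["n_early_size"]
)
print(per_flow)  # [8, 8, 8, 8, 6, 6, 6, 6, 4, 4, 4, 4]
```

So with these settings the last four flows operate on only 4 of the original 8 grouped channels.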

@chaiyujin So what's your training framework? Did you retrain from the provided model, and did it work? I'm having trouble following you.

@benlaitang Sorry about my English. I use my own training framework. I trained WaveGlow from scratch and never tested fine-tuning from the provided model.

I got exactly the same problem.

@wenyong-h did you solve the problem?

No, I'm training from scratch now.

- Yes, the loss should be negative. We trained the model for 580k iterations with batch size 24. 22k iterations with batch size 3 is probably not enough to produce intelligible speech.

- The second model is shared for inference only, not for resuming training.
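Why a negative loss is expected: for a normalizing flow, the NLL of x is -log p_z(z) - log|det J|, and because this is a density rather than a probability, it can drop below zero once the log-determinant term is large enough. The toy 1-D affine flow below is hypothetical and far simpler than WaveGlow's coupling layers and invertible convolutions; it only shows the sign flip.

```python
import math

def affine_flow_nll(x, scale, sigma=1.0):
    """NLL of a scalar x under the toy flow z = scale * x, with z ~ N(0, sigma**2)."""
    z = scale * x
    # log N(z; 0, sigma^2), including the constant term
    log_pz = -0.5 * (z / sigma) ** 2 - math.log(sigma) - 0.5 * math.log(2 * math.pi)
    # Change-of-variables term: log |dz/dx|
    log_det = math.log(abs(scale))
    return -(log_pz + log_det)

print(round(affine_flow_nll(0.01, 1.0), 4))   # 0.919 (positive)
print(round(affine_flow_nll(0.01, 10.0), 4))  # -1.3786 (negative)
```

The only difference between the two calls is the log-determinant contribution (log 10 ≈ 2.30), which is enough to push the NLL below zero.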

@rafaelvalle thanks a lot. I will try from scratch again.

Closing. Please re-open if necessary.

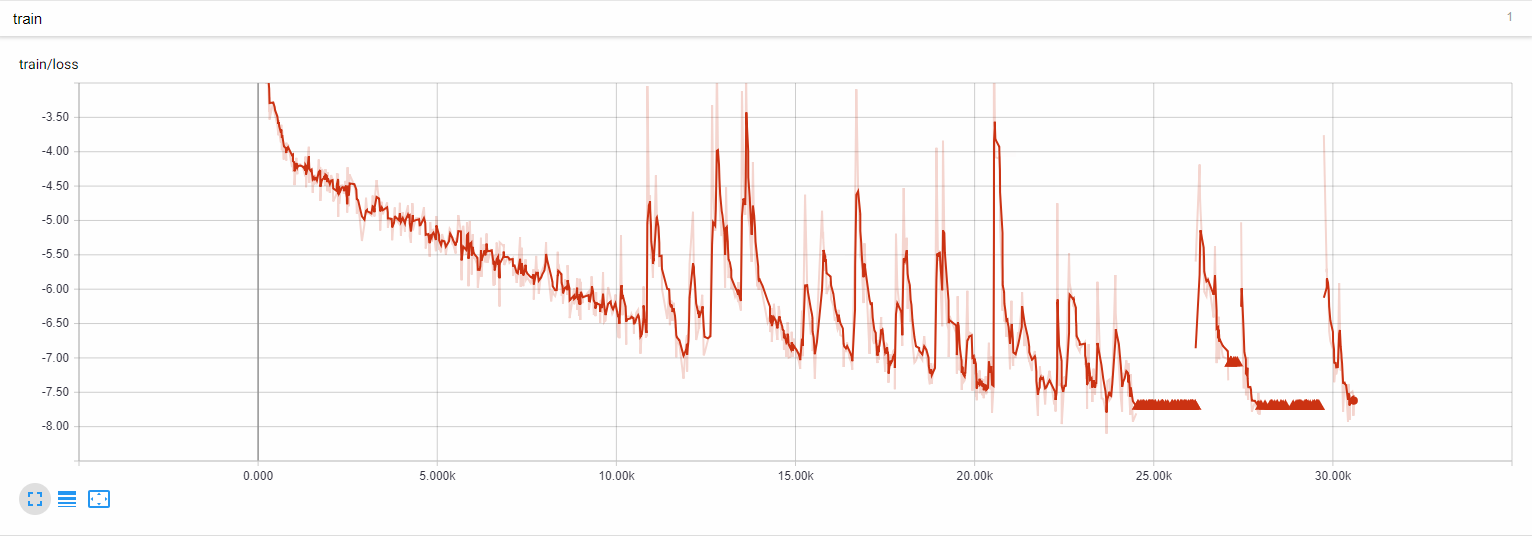

@chaiyujin Did you solve the issue of nan loss? Because I encounter similar issue. My training curve is something like these:

@rafaelvalle Is there any way to fine-tune, retrain, or resume training of the model?

@chaiyujin Thanks for your solution. I also hit the NaN problem. With one GPU and batch size 1, the training loss is fine. But with 8 GPUs using torch.nn.parallel.data_parallel and batch size 8, I got a NaN loss after a few thousand steps. Lowering the learning rate from 1e-4 to 1e-5 solved the NaN problem.
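The learning-rate change above, plus an optional guard that skips updates on a non-finite loss, might look like this in PyTorch. The model and loss are hypothetical stand-ins (a tiny linear layer and MSE), and the skip-on-NaN guard is an assumed technique, not something reported in this thread.

```python
import torch

model = torch.nn.Linear(8, 1)  # hypothetical stand-in for the WaveGlow model
optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)

# Lower the learning rate in place (1e-4 -> 1e-5), e.g. after building the optimizer.
for group in optimizer.param_groups:
    group["lr"] = 1e-5

def train_step(batch, target):
    """One update that is skipped when the loss is NaN/inf (assumed guard)."""
    optimizer.zero_grad()
    loss = torch.nn.functional.mse_loss(model(batch), target)
    if not torch.isfinite(loss):
        return None  # do not backprop or step on a non-finite loss
    loss.backward()
    optimizer.step()
    return loss.item()
```

Skipping a step only hides the symptom; if non-finite losses keep appearing, the lower learning rate (or the other fixes in this thread) is still needed.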
