apache / iotdb

Apache IoTDB

Home Page: https://iotdb.apache.org/

License: Apache License 2.0

// When columnPattern is "root.*", all column names are returned.

// When columnPattern is a single column (e.g. "root.sgcc.wf03.wt01.temperature"), the data type of that column is returned.

public ResultSet getColumns(String catalog, String schemaPattern, String columnPattern, String deltaObjectPattern)

Is this API still maintained in the current version? The ResultSet I get back is null. Could you take a look? Thanks.

This may occur in versions 0.9.0 through 0.9.3.

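After switching IoTDB's storage mode to HDFS, the server ran successfully. I wrote a script that inserts data continuously; while it runs, manually issuing random queries frequently returns 500 errors. There are two kinds of error messages.

The server log shows: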

2020-07-16 14:52:32,783 [pool-7-IoTDB-Query-ServerServiceImpl-thread-10] ERROR org.apache.iotdb.db.query.dataset.RawQueryDataSetWithoutValueFilter$ReadTask:107 - Something gets wrong:

java.nio.BufferUnderflowException: null

at java.nio.Buffer.nextGetIndex(Buffer.java:506)

at java.nio.HeapByteBuffer.getInt(HeapByteBuffer.java:364)

at org.apache.iotdb.tsfile.file.header.ChunkHeader.deserializeFrom(ChunkHeader.java:118)

at org.apache.iotdb.tsfile.read.TsFileSequenceReader.readChunkHeader(TsFileSequenceReader.java:659)

at org.apache.iotdb.tsfile.read.TsFileSequenceReader.readMemChunk(TsFileSequenceReader.java:681)

at org.apache.iotdb.db.engine.cache.ChunkCache.get(ChunkCache.java:122)

at org.apache.iotdb.db.query.reader.chunk.DiskChunkLoader.loadChunk(DiskChunkLoader.java:46)

at org.apache.iotdb.db.utils.FileLoaderUtils.loadPageReaderList(FileLoaderUtils.java:150)

at org.apache.iotdb.db.query.reader.series.SeriesReader.unpackOneChunkMetaData(SeriesReader.java:377)

at org.apache.iotdb.db.query.reader.series.SeriesReader.unpackAllOverlappedChunkMetadataToCachedPageReaders(SeriesReader.java:368)

at org.apache.iotdb.db.query.reader.series.SeriesReader.hasNextPage(SeriesReader.java:317)

at org.apache.iotdb.db.query.reader.series.SeriesRawDataBatchReader.readPageData(SeriesRawDataBatchReader.java:151)

at org.apache.iotdb.db.query.reader.series.SeriesRawDataBatchReader.readChunkData(SeriesRawDataBatchReader.java:143)

at org.apache.iotdb.db.query.reader.series.SeriesRawDataBatchReader.hasNextBatch(SeriesRawDataBatchReader.java:98)

at org.apache.iotdb.db.query.dataset.RawQueryDataSetWithoutValueFilter$ReadTask.runMayThrow(RawQueryDataSetWithoutValueFilter.java:67)

at org.apache.iotdb.db.concurrent.WrappedRunnable.run(WrappedRunnable.java:32)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

The earlier the timestamp, the more likely the error; in other words, newly inserted timestamps rarely trigger it.

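After copying incubator-iotdb\distribution\target\apache-iotdb-0.10.0-SNAPSHOT-incubating-bin, built on Windows, to Linux and granting start-server.sh execute permission with chmod, running start-server.sh may fail with one of two kinds of errors: bad interpreter or $'\r'.

Solution:

1. Change the file format of start-server.sh in the sbin directory to Unix.

2. Likewise, change the file format of iotdb-env.sh in the conf directory to Unix.

Running start-server.sh again then works normally.

Reference: solution reference web page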
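The "change the file format to Unix" step amounts to replacing Windows CRLF line endings with LF. A minimal sketch of that conversion follows; the class and method names (`LineEndings`, `toUnix`, `convertFile`) are hypothetical and not part of IoTDB, and the files to convert are assumed to be passed on the command line.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

class LineEndings {
    // Replace every Windows CRLF pair with a single Unix LF.
    static String toUnix(String text) {
        return text.replace("\r\n", "\n");
    }

    // Rewrite a script file in place with Unix line endings (assumes UTF-8 content).
    static void convertFile(Path script) throws IOException {
        String original = new String(Files.readAllBytes(script), StandardCharsets.UTF_8);
        Files.write(script, toUnix(original).getBytes(StandardCharsets.UTF_8));
    }

    public static void main(String[] args) throws IOException {
        // Example arguments: sbin/start-server.sh conf/iotdb-env.sh
        for (String arg : args) {
            convertFile(Paths.get(arg));
            System.out.println("converted " + arg);
        }
    }
}
```

Tools such as an editor's "Unix line endings" option achieve the same result; the sketch only makes explicit what changes in the file.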

Describe the bug

Testing with the sample data in version 0.10.0:

select count(status), max_value(temperature) from root.ln.wf01.wt01 group by ([2017-11-01T00:00:00, 2017-11-07T23:00:00),1d) limit 5 offset 3

and

select count(status), max_value(temperature) from root.ln.wf01.wt01 group by ([2017-11-01T00:00:00, 2017-11-07T23:00:00),1d)

return the same result.
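To make the expectation concrete, here is a small sketch of what the first query would return if LIMIT and OFFSET were applied to the rows produced by GROUP BY. That interpretation is an assumption drawn from the report, and `GroupByPaging`, `bucketStarts`, and `page` are hypothetical names, not IoTDB code.

```java
import java.time.Duration;
import java.time.LocalDateTime;
import java.util.ArrayList;
import java.util.List;

class GroupByPaging {
    // Start times of the buckets that cover [start, end) in steps of `step`.
    static List<LocalDateTime> bucketStarts(LocalDateTime start, LocalDateTime end, Duration step) {
        List<LocalDateTime> starts = new ArrayList<>();
        for (LocalDateTime t = start; t.isBefore(end); t = t.plus(step)) {
            starts.add(t);
        }
        return starts;
    }

    // Skip `offset` rows, then keep at most `limit` rows.
    static <T> List<T> page(List<T> rows, int offset, int limit) {
        int from = Math.min(offset, rows.size());
        int to = Math.min(from + limit, rows.size());
        return rows.subList(from, to);
    }

    public static void main(String[] args) {
        List<LocalDateTime> buckets = bucketStarts(
            LocalDateTime.of(2017, 11, 1, 0, 0),
            LocalDateTime.of(2017, 11, 7, 23, 0),
            Duration.ofDays(1));
        System.out.println("all buckets:      " + buckets.size());
        System.out.println("offset 3 limit 5: " + page(buckets, 3, 5));
    }
}
```

Under this assumption the interval yields seven daily buckets, so `limit 5 offset 3` would keep only the last four, which differs from the unpaged result.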

Screenshots

Actual result

Can not establish connection with jdbc:iotdb://127.0.0.1:6667/. because Required field 'statusCode' was not present! Struct: TS_Status(statusCode:null)

ALTER timeseries root.turbine1.d1.s1 UPSERT ALIAS=p00001 TAGS(tag2=newV2, tag3=v3) ATTRIBUTES(attr3=v3, attr4=v4),

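Currently an alias can be added when a timeseries is registered, but it cannot be modified afterwards. It should be possible to upsert the alias, provided it stays unique within the device.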

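Using IoTDB version 0.10.0-SNAPSHOT.

When IoTDB contains no data, visiting IP:8181 displays the monitoring page normally. After data is written, visiting it again returns a 500 error.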

java.lang.NullPointerException

at org.apache.iotdb.db.metrics.ui.MetricsPage.sqlRow(MetricsPage.java:120)

at org.apache.iotdb.db.metrics.ui.MetricsPage.render(MetricsPage.java:83)

at org.apache.iotdb.db.metrics.server.QueryServlet.doGet(QueryServlet.java:42)

Looking at line 120 of MetricsPage.java:

+ "<td>" + (errMsg.equals("") ? "== Parsed Physical Plan ==" : errMsg)

Is the exception caused by errMsg being null here? And why does it only appear after data has been written?
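If errMsg can indeed be null, calling `errMsg.equals("")` would throw exactly this NullPointerException. A null-safe version of the same expression might look like the sketch below; `MetricsCell` and `planCell` are hypothetical names, and this is not presented as the project's actual fix.

```java
class MetricsCell {
    static final String DEFAULT_TEXT = "== Parsed Physical Plan ==";

    // Treat a null message the same as an empty one instead of dereferencing it.
    static String planCell(String errMsg) {
        boolean noError = errMsg == null || errMsg.isEmpty();
        return "<td>" + (noError ? DEFAULT_TEXT : errMsg);
    }

    public static void main(String[] args) {
        System.out.println(planCell(null));
        System.out.println(planCell(""));
        System.out.println(planCell("401: parse error"));
    }
}
```

Writing the comparison as `"".equals(errMsg)` would also avoid the exception, though it would then render the string "null" for a null message.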

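When building a mock database and running large-scale insert tests:

the data is too monotonous,

defining custom data is too cumbersome,

and the data read back has little value for analysis.

I suggest adding a random-number feature for simulating large-scale inserts.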
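Until such a feature exists, random test data can be generated on the client side. The sketch below builds insert statements with pseudo-random values; `RandomInserts` and `generate` are hypothetical names, the value range 1 to 10 is arbitrary, and the statement shape simply mirrors the insert statements used elsewhere on this page.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Random;

class RandomInserts {
    // Build `count` insert statements with consecutive timestamps and random values in [1, 10).
    static List<String> generate(String device, String measurement, long startTime, int count, long seed) {
        Random random = new Random(seed); // fixed seed keeps test runs repeatable
        List<String> statements = new ArrayList<>();
        for (int i = 0; i < count; i++) {
            double value = 1 + 9 * random.nextDouble();
            statements.add(String.format(Locale.ROOT,
                "insert into %s(timestamp, %s) values(%d, %.2f)",
                device, measurement, startTime + i, value));
        }
        return statements;
    }

    public static void main(String[] args) {
        for (String sql : generate("root.sg1.d1", "s1", 1L, 5, 42L)) {
            System.out.println(sql);
        }
    }
}
```

Each generated string could then be passed to `statement.addBatch(...)` in a JDBC loop.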

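Some business scenarios need to query historical data from the time-series database, but customers usually care only about the most recent few days. The SQL, however, does not support querying in reverse time order, like order by time desc in a relational database.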

Describe the bug

When batch-inserting data, once an illegal SQL statement occurs, none of the statements after it succeed.

To Reproduce

public class JDBCExample {

public static void main(String[] args) throws ClassNotFoundException, SQLException {

Class.forName("org.apache.iotdb.jdbc.IoTDBDriver");

try (Connection connection = DriverManager.getConnection("jdbc:iotdb://127.0.0.1:6667/", "root", "root");

Statement statement = connection.createStatement()) {

statement.execute("SET STORAGE GROUP TO root.sg1");

try {

for (int i = 1; i <= 5; i++) {

statement.addBatch("insert into root.sg1.d1(timestamp, s1) values("+ i + "," + 1 + ")");

statement.executeBatch();

statement.clearBatch();

}

statement.addBatch("insert into root.sg1.d1(timestamp, s 2) values("+ 0 + "," + 1 + ")");

statement.executeBatch();

statement.clearBatch();

for (int i = 1; i <= 5; i++) {

statement.addBatch("insert into root.sg1.d1(timestamp, s2) values("+ i + "," + 1 + ")");

statement.executeBatch();

statement.clearBatch();

}

} catch (BatchUpdateException e) {

e.printStackTrace();

}

ResultSet resultSet = statement.executeQuery("select * from root where time <= 10");

outputResult(resultSet);

resultSet = statement.executeQuery("select count(*) from root");

outputResult(resultSet);

// resultSet = statement.executeQuery("select count(*) from root where time >= 1 and time <= 100 group by ([0, 100], 20ms, 20ms)");

// outputResult(resultSet);

}

}

private static void outputResult(ResultSet resultSet) throws SQLException {

if (resultSet != null) {

System.out.println("--------------------------");

final ResultSetMetaData metaData = resultSet.getMetaData();

final int columnCount = metaData.getColumnCount();

for (int i = 0; i < columnCount; i++) {

System.out.print(metaData.getColumnLabel(i + 1) + " ");

}

System.out.println();

while (resultSet.next()) {

for (int i = 1; ; i++) {

System.out.print(resultSet.getString(i));

if (i < columnCount) {

System.out.print(", ");

} else {

System.out.println();

break;

}

}

}

System.out.println("--------------------------\n");

}

}

}
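On the client side, one way to keep later batches running after a failure is to catch the exception per batch rather than around the whole sequence. The sketch below illustrates only that control flow with a hypothetical `Batch` interface standing in for `statement.executeBatch()`; it does not contact a server and says nothing about how the server itself treats statements after an illegal one.

```java
import java.sql.BatchUpdateException;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.ArrayList;
import java.util.List;

class BatchIsolation {
    // Hypothetical stand-in for one call to statement.executeBatch().
    interface Batch {
        int[] execute() throws SQLException;
    }

    // Run every batch; a failure is recorded and the loop moves on to the next batch.
    static List<String> runAll(List<Batch> batches) {
        List<String> outcomes = new ArrayList<>();
        for (Batch batch : batches) {
            try {
                outcomes.add("ok:" + batch.execute().length);
            } catch (SQLException e) {
                outcomes.add("failed:" + e.getMessage());
            }
        }
        return outcomes;
    }

    // Three batches, the second of which simulates an illegal statement.
    static List<String> demo() {
        List<Batch> batches = new ArrayList<>();
        batches.add(() -> new int[] {1});
        batches.add(() -> {
            throw new BatchUpdateException("illegal sql", new int[] {Statement.EXECUTE_FAILED});
        });
        batches.add(() -> new int[] {1});
        return runAll(batches);
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```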

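Compiling the server code with Maven fails.

1. I first suspected that thrift was not installed on my CentOS 7 machine, so I installed it, but the build still failed with the same error.

2. Looking closely at the error message "version `GLIBCXX_3.4.20' not found", I suspected that the gcc version was causing the build failure.

Following the method in the blog post at https://www.bbsmax.com/A/6pdDOoOD5w/, copy an existing libstdc++.so.6.0.20 from the local machine to the target directory and recreate the symbolic link.

Note: CentOS 7.8 does not ship libstdc++.so.6.0.20, but installing Anaconda had downloaded libstdc++.so.6.0.25 for me. I copied the .25 version over instead, and the Maven build then succeeded.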

The Problem

After compilation, double clicking the "start-server.bat" results in quitting unexpectedly.

Possible solution

ALTER USER root SET PASSWORD '123456';

Changing the root password fails with an error;

ALTER USER test SET PASSWORD '123456';

Other users can be changed successfully. Why?

hive-tsfile: after creating a table in Hive, queries fail with an error (IoTDB 1.10.0)

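Hello, it is a pleasure to come across your project!

I understand that the database already performs very well, and I would very much like to contribute. However, I could not find a detailed explanation of IoTDB that tells me, when I open a specific class, which part of the database I am changing and what it affects upstream and downstream.

Could you add a clear diagram or document, something like a navigation map, to help us understand?

Thanks.

My test code is below. Run it twice in a row, then execute select count(*) from root align by device, and the error appears.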

package iotdb;

import java.sql.*;

import java.text.SimpleDateFormat;

import java.util.ArrayList;

import java.util.Date;

import java.util.List;

public class PerformanceTest {

public static final String URL = "jdbc:iotdb://192.168.235.101:6667/";

public static int record = 10000;

public static int deviceNum = 30;

private static String startTime = "2020-01-01 00:00:00";

private static final int BATCH_NUM = 50;

public static final int INTERVAL = 15;

public static void createGroup() {

try (Connection connection = DriverManager.getConnection(URL, "root", "root");

Statement statement = connection.createStatement()) {

try {

statement.execute("SET STORAGE GROUP TO root.org1");

} catch (Throwable e) {

e.printStackTrace();

}

} catch (Throwable e) {

e.printStackTrace();

}

}

public static void insert(String startTime) {

long start = System.currentTimeMillis();

List<Thread> list = new ArrayList<>();

for (int i = 0; i < deviceNum; i++) {

int finalI = i;

Thread thread = new Thread(() -> {

try (Connection connection = DriverManager.getConnection(URL, "root", "root");

Statement statement = connection.createStatement()) {

TimeUtils timeUtils = new TimeUtils();

SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");

Date lastTime = sdf.parse(startTime);

for (int j = 0; j < record; j++) {

String sql = "insert into root.org1.flow.device" + finalI + "(timestamp, forward_flow, reverse_flow, instant_flow) values(" + sdf.format(lastTime) + "," + 100 + "," + 0 + "," + 50 + ")";

statement.addBatch(sql);

lastTime = timeUtils.addSecond(lastTime, INTERVAL);

if (record >= BATCH_NUM && j != 0 && j % BATCH_NUM == 0) {

statement.executeBatch();

statement.clearBatch();

System.out.println("batch insert");

}

}

statement.executeBatch();

statement.clearBatch();

System.out.println("final batch insert");

} catch (Throwable e) {

e.printStackTrace();

}

});

list.add(thread);

}

list.forEach(t -> {

t.start();

});

list.forEach(t -> {

try {

t.join();

} catch (InterruptedException e) {

e.printStackTrace();

}

});

long end = System.currentTimeMillis();

int total = deviceNum * record;

long ms = end - start;

// Multiply before dividing so integer division does not truncate the rate.

System.out.println("insert finished.num:" + total + ",time:" + ms + ",rate:" + total * 1000L / ms);

}

public static void main(String[] args) throws ClassNotFoundException, SQLException {

for (int i = 0; i < args.length; i++) {

String arg = args[i];

if ("-deviceNum".equalsIgnoreCase(arg)) {

deviceNum = Integer.parseInt(args[i + 1]);

} else if ("-record".equalsIgnoreCase(arg)) {

record = Integer.parseInt(args[i + 1]);

} else if ("-startTime".equalsIgnoreCase(arg)) {

startTime = args[i + 1];

} else {

continue;

}

}

Class.forName("org.apache.iotdb.jdbc.IoTDBDriver");

createGroup();

insert(startTime);

// query();

}

private static void query() {

try (Connection connection = DriverManager.getConnection(URL, "root", "root");

Statement statement = connection.createStatement()) {

ResultSet resultSet = statement.executeQuery("select count(*) from root.org1 align by device");

outputResult(resultSet);

} catch (Throwable e) {

e.printStackTrace();

}

}

private static void outputResult(ResultSet resultSet) throws SQLException {

if (resultSet != null) {

System.out.println("--------------------------");

final ResultSetMetaData metaData = resultSet.getMetaData();

final int columnCount = metaData.getColumnCount();

for (int i = 0; i < columnCount; i++) {

System.out.print(metaData.getColumnLabel(i + 1) + " ");

}

System.out.println();

while (resultSet.next()) {

for (int i = 1; ; i++) {

System.out.print(resultSet.getString(i));

if (i < columnCount) {

System.out.print(", ");

} else {

System.out.println();

break;

}

}

}

System.out.println("--------------------------\n");

}

}

}

Companies that are using or testing IoTDB are welcome to register here in the format company name + homepage (optional) + use case (optional). Your support is important to us.

We will periodically sync the entries to the list of adopters on the official website (TBD).

Hello, what causes this?

IoTDB> SELECT max_time(5452) FROM root.lin.l1.18_0 where time < 1590388748190 and time > 1590016639981 GROUP BY([1590016639981, 1590088748190), 1m)

Msg: 500: all cached chunks should be consumed first

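Version 0.10.0-SNAPSHOT.

In the client tool, running an invalid SQL statement followed by a valid one returns abnormal data.

Steps:

Use the JDBC Example code to write 1,000,000 points to each of 30 timeseries, root.demo.s0 through root.demo.s29.

Connect to the server with the client tool.

Run select count(s0) from root.demo; the result is 1000000, which is correct.

Enter and run the following invalid SQL statements in succession:

- select count(s0,s1) from root.demo

(Msg: Index 0 out of bounds for length 0)

- select count(*) from root.demo.s0

(Msg: 401: line 1:15 missing ')' at ',')

Run select count(s0) from root.demo; this time the result is: Empty set. This is wrong.

Run select count(s0) from root.demo again; the result is 1000000, which is correct again.

Is this behavior a bug?

As the title says, what causes this problem? I am using version 0.9.2.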

This PR is for collecting the related articles about Apache IoTDB.

Slides and videos are also welcome.

We will publish on official media if suitable.

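Currently the result set is ordered by registration time. When the result set is large, paging through it does not reveal which points were written to most recently. I first assumed this was a job for a last query, but on reflection the need would be met if metadata queries could be associated with activity (hotness) information and sorted in descending order.

150,000 records, spread across different devices,

root.20200108.1812022495 -> root.20200108.[device ID]

The following exception occurs: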

2020-01-08 16:13:21,166 [pool-3-IoTDB-JDBC-Client-thread-2] DEBUG org.apache.iotdb.db.conf.adapter.IoTDBConfigDynamicAdapter:134 - memtableSizeInByte 73717977 is smaller than memTableSizeFloorThreshold 73736192

2020-01-08 16:13:21,167 [pool-3-IoTDB-JDBC-Client-thread-2] ERROR org.apache.iotdb.db.service.TSServiceImpl:1276 - IoTDB: error occurs when executing statements

org.apache.iotdb.db.exception.query.QueryProcessException: IoTDB system load is too large to add timeseries

at org.apache.iotdb.db.qp.executor.QueryProcessExecutor.insertBatch(QueryProcessExecutor.java:301)

at org.apache.iotdb.db.service.TSServiceImpl.insertBatch(TSServiceImpl.java:1257)

at org.apache.iotdb.service.rpc.thrift.TSIService$Processor$insertBatch.getResult(TSIService.java:1705)

at org.apache.iotdb.service.rpc.thrift.TSIService$Processor$insertBatch.getResult(TSIService.java:1690)

at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39)

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)

at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

2020-01-08 16:13:21,167 [pool-3-IoTDB-JDBC-Client-thread-2] DEBUG org.apache.iotdb.db.conf.adapter.IoTDBConfigDynamicAdapter:134 - memtableSizeInByte 73717977 is smaller than memTableSizeFloorThreshold 73736192

2020-01-08 16:13:21,168 [pool-3-IoTDB-JDBC-Client-thread-2] ERROR org.apache.iotdb.db.service.TSServiceImpl:1276 - IoTDB: error occurs when executing statements

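1. I changed the root user's password and restarted the server.

2. When inserting data with Python paho-mqtt, I did not set any username or password parameters.

3. Yet the data was still inserted successfully -_-!!!

iotdb version: 0.10.0

paho mqtt: 1.5.0 (pip install paho-mqtt)

The code is as follows: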

from time import time

import paho.mqtt.publish as publish


def timestamp():
    # Current time in milliseconds.
    return int(round(time() * 1000))


def single(tx_id: str, log_type: str, log_content: str, hostname: str = "192.168.3.181", port: int = 1883):
    device_id = "root.log.%s" % tx_id
    payload = "{\n" "\"device\":\"%s\",\n" "\"timestamp\":%d,\n" "\"measurements\":[\"type\",\"content\"],\n" "\"values" \
              "\":[\"%s\",\"%s\"]\n" "}" % (device_id, timestamp(), log_type, log_content)
    # No username or password is passed here.
    publish.single(topic=device_id, payload=payload, hostname=hostname, port=port)


if __name__ == '__main__':
    type_list = ["INFO", "WARNING", "ERROR"]
    content_list = ["Auto report: component incomplete", "Log sync", "Command length check failed", "Invalid configuration parameter"]
    begin_time = time()
    for i in range(10):
        single("1411111111", type_list[i % len(type_list)], content_list[i % len(content_list)])
    end_time = time()
    run_time = end_time - begin_time
    print('Loop run time:', run_time)

create timeseries root.turbine.d1.s1(temprature) with datatype=FLOAT, encoding=RLE, compression=SNAPPY tags(tag1=v1, tag2=v2) attributes(attr1=v1, attr2=v2)

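Tags and similar label information can currently be added when a timeseries is created. However, if the implementer makes a mistake or the requirements change, the tags added earlier cannot be updated without deleting and recreating the timeseries. Since the existing data must not be deleted, this is very inconvenient. Functionality like the following is needed: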

alter timeseries root.turbine.d1.s1 change tag1 newV1

alter timeseries root.turbine.d1.s1 add tag3 v3

alter timeseries root.turbine.d1.s1 drop tag2

This would make it easy to manage this information without deleting data.

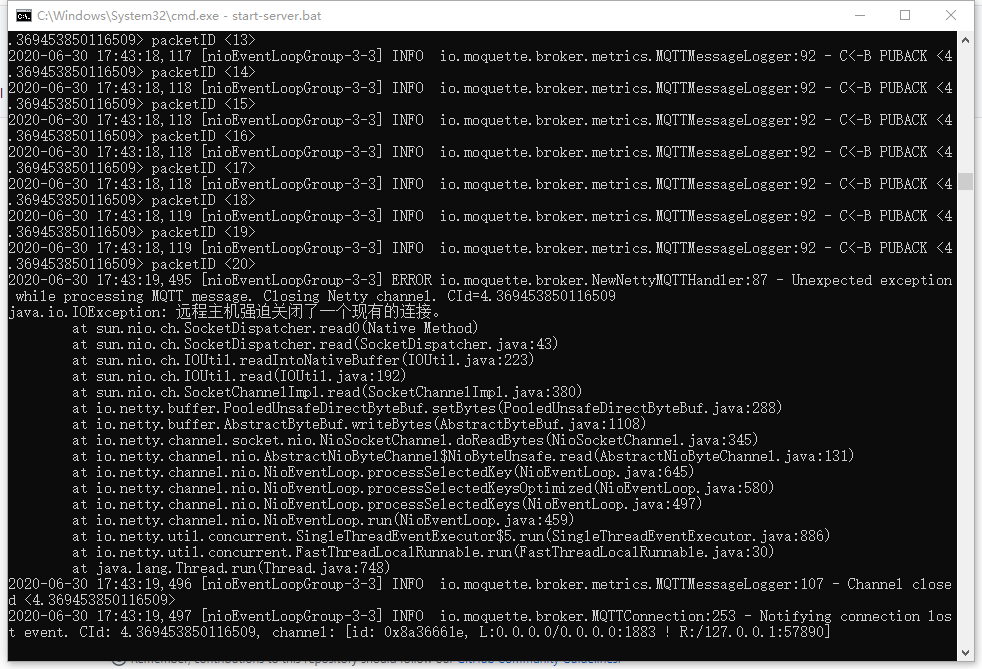

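An error occurs when inserting data with Python paho-mqtt:

client_id must not be empty and qos must be 0; otherwise an error occurs.

With qos=1 or 2, the following error occurs, and only 20 records are inserted instead of the expected 100,000.

iotdb version: 0.10.0

paho-mqtt: 1.5.0

The code is as follows: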

import random
import time

import paho.mqtt.client as mqtt


def on_connect(client, userdata, flags, rc):
    print("Connected with result code: " + str(rc))


def on_message(client, userdata, msg):
    print(msg.topic + " " + str(msg.payload))


client = mqtt.Client(client_id=str(random.uniform(1, 10)))
# client = mqtt.Client()
client.username_pw_set("root", "root")
client.connect('127.0.0.1', 1883)

timestamp = lambda: int(round(time.time() * 1000))

for i in range(100000):
    message = "{\n" "\"device\":\"root.log.d1\",\n" "\"timestamp\":%d,\n" "\"measurements\":[\"s1\"],\n" "\"values" \
              "\":[%f]\n" "}" % (timestamp() + 1000 * i, random.uniform(1, 10))
    client.publish('root.log.d1.info', payload=message, qos=0, retain=False)

client.disconnect()

Limit and Offset statement doesn't work in Session in 0.9.x version.

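Above is my scheduled test program.

The "Connection timed out: connect" error has occurred 3 times in the ten-plus hours from last night until now, but it does not affect the next write.

Client and server versions are both 0.9.2.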

logs.zip

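Using version 0.10.

Create another timeseries under an existing timeseries (root.ln.device1.s1), for example root.ln.device1.s1.signal_value. JDBC and the CLI behave differently.

1) Doing this through JDBC hangs the service. The error log printed is: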

java.lang.ClassCastException: org.apache.iotdb.db.metadata.mnode.LeafMNode cannot be cast to org.apache.iotdb.db.metadata.mnode.InternalMNode

2) In the CLI the statement succeeds, but all timeseries at the same level as root.ln.device1.s1 become hidden, as shown below:

IoTDB> show timeseries root.ln

+-------------+-----+-------------+--------+--------+-----------+

| timeseries|alias|storage group|dataType|encoding|compression|

+-------------+-----+-------------+--------+--------+-----------+

|root.ln.jgy.1| null| root.ln| FLOAT| GORILLA| SNAPPY|

|root.ln.jgy.2| null| root.ln| FLOAT| GORILLA| SNAPPY|

+-------------+-----+-------------+--------+--------+-----------+

Total line number = 2

It costs 0.022s

IoTDB> create timeseries root.ln.jgy.2.signal_value with datatype=FLOAT,encoding=GORILLA

IoTDB> show timeseries root.ln

+--------------------------+-----+-------------+--------+--------+-----------+

| timeseries|alias|storage group|dataType|encoding|compression|

+--------------------------+-----+-------------+--------+--------+-----------+

|root.ln.jgy.2.signal_value| null| root.ln| FLOAT| GORILLA| SNAPPY|

+--------------------------+-----+-------------+--------+--------+-----------+

Shouldn't creating a timeseries under an existing timeseries simply be disallowed?
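The rule suggested here can be sketched as a prefix check performed before a new path is registered. `TimeseriesRegistry` below is a hypothetical, much simplified stand-in for the metadata tree and is not IoTDB's implementation; it only illustrates rejecting a path that would sit beneath, or above, an existing timeseries.

```java
import java.util.TreeSet;

class TimeseriesRegistry {
    private final TreeSet<String> leaves = new TreeSet<>();

    // Register a timeseries path unless it nests under, or contains, an existing one.
    void create(String path) {
        for (String leaf : leaves) {
            if (path.startsWith(leaf + ".")) {
                throw new IllegalArgumentException(leaf + " is already a timeseries; cannot create " + path);
            }
            if (leaf.startsWith(path + ".")) {
                throw new IllegalArgumentException(path + " is a prefix of existing timeseries " + leaf);
            }
        }
        leaves.add(path);
    }

    // Reproduces the CLI scenario from the report and checks that the nested path is rejected.
    static boolean rejectsNested() {
        TimeseriesRegistry registry = new TimeseriesRegistry();
        registry.create("root.ln.jgy.1");
        registry.create("root.ln.jgy.2");
        try {
            registry.create("root.ln.jgy.2.signal_value");
            return false;
        } catch (IllegalArgumentException expected) {
            return registry.leaves.size() == 2;
        }
    }

    public static void main(String[] args) {
        System.out.println("nested create rejected: " + rejectsNested());
    }
}
```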

data count: 18999994 total

SQL: select * from root.position where time > 1559179819000 limit 10

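Running the SQL above:

Time reported by the shell client: 6.171 s

IoTDB Metrics Server: 0-7 ms??

Why is the difference between the two so large?

I also tried the JDBC API, which took about 5 s. I expected queries to be fast, but they turned out this slow; it feels worse than HBase...

This is your product introduction. I don't know whether I am using it wrong, but queries are nowhere near millisecond level; beyond 3 million records they already take seconds. The documentation on the official website is not detailed either, and I could not find even basic performance tuning guidance.

Please advise, and please improve the documentation soon. There is not even a cluster mode; who would dare to use it in production...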

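Can TsFile files only be read and written? Can data be appended to or modified in an existing TsFile?

This feature is sometimes needed. While data is being written, a fault in a third-party system can cause data with abnormal timestamps to be written, for example timestamps in the future or long in the past. Once business staff discover it, the abnormal data has to be deleted, which requires deleting data within a closed time interval. This is currently not supported.

This problem occasionally occurs during scheduled writes. Once the error appears, the next scheduled write fails to write anything.

Client and server versions: 0.9.2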

logs.zip

Maximum memory allocation pool = 4096MB, initial memory allocation pool = 800MB

If you want to change this configuration, please check conf/iotdb-env.sh(Unix or OS X, if you use Windows, check conf/iotdb-env.bat).

2020-05-22 18:43:19,086 [main] INFO org.apache.iotdb.db.conf.IoTDBDescriptor:91 - Start to read config file ./../conf/iotdb-engine.properties

2020-05-22 18:43:19,089 [main] INFO org.apache.iotdb.db.conf.IoTDBDescriptor:111 - The stat_monitor_detect_freq_sec value is smaller than default, use default value

2020-05-22 18:43:19,090 [main] INFO org.apache.iotdb.db.conf.IoTDBDescriptor:118 - The stat_monitor_retain_interval_sec value is smaller than default, use default value

2020-05-22 18:43:19,090 [main] INFO org.apache.iotdb.db.conf.IoTDBDescriptor:228 - Time zone has been set to +08:00

2020-05-22 18:43:19,093 [main] INFO org.apache.iotdb.tsfile.common.conf.TSFileDescriptor:103 - Start to read config file ./../conf/iotdb-engine.properties

2020-05-22 18:43:19,095 [main] INFO org.apache.iotdb.db.conf.IoTDBConfigCheck:54 - System configuration is ok.

2020-05-22 18:43:19,097 [main] INFO org.apache.iotdb.db.service.StartupChecks:47 - JMX is enabled to receive remote connection on port 31999

2020-05-22 18:43:19,097 [main] INFO org.apache.iotdb.db.service.StartupChecks:57 - JDK veriosn is 8.

2020-05-22 18:43:19,098 [main] INFO org.apache.iotdb.db.service.IoTDB:80 - Setting up IoTDB...

2020-05-22 18:43:19,111 [main] ERROR org.apache.iotdb.db.concurrent.IoTDBDefaultThreadExceptionHandler:31 - Exception in thread main-1

java.lang.ArrayIndexOutOfBoundsException: 1

at org.apache.iotdb.db.metadata.MManager.operation(MManager.java:217)

at org.apache.iotdb.db.metadata.MManager.initFromLog(MManager.java:177)

at org.apache.iotdb.db.metadata.MManager.init(MManager.java:149)

at org.apache.iotdb.db.service.IoTDB.initMManager(IoTDB.java:121)

at org.apache.iotdb.db.service.IoTDB.setUp(IoTDB.java:92)

at org.apache.iotdb.db.service.IoTDB.active(IoTDB.java:69)

at org.apache.iotdb.db.service.IoTDB.main(IoTDB.java:55)

2020-05-22 18:43:19,114 [Thread-1] INFO org.apache.iotdb.db.service.IoTDBShutdownHook:32 - IoTDB exits. Jvm memory usage: 0 GB 40 MB 104 KB 256 B

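I mainly store Zabbix monitoring data here, using one storage group and writing 2,000 timeseries data points every minute. Which memory parameters should I adjust? I am currently using a single machine with 4 cores and 16 GB of memory.

I modified the configuration in iotdb-env.sh:

half_system_memory_in_mb=`expr $system_memory_in_mb / 2`

quarter_system_memory_in_mb=`expr $half_system_memory_in_mb / 2`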

if [ "$half_system_memory_in_mb" -gt "1024" ]

then

half_system_memory_in_mb="4096"

fi

if [ "$quarter_system_memory_in_mb" -gt "8192" ]

then

quarter_system_memory_in_mb="8192"

fi

if [ "$half_system_memory_in_mb" -gt "$quarter_system_memory_in_mb" ]

then

max_heap_size_in_mb="$half_system_memory_in_mb"

else

max_heap_size_in_mb="$quarter_system_memory_in_mb"

fi

MAX_HEAP_SIZE="${max_heap_size_in_mb}M"

# Young gen: min(max_sensible_per_modern_cpu_core * num_cores, 1/4 * heap size)

max_sensible_yg_per_core_in_mb="200"

max_sensible_yg_in_mb=`expr $max_sensible_yg_per_core_in_mb "*" $system_cpu_cores`

desired_yg_in_mb=`expr $max_heap_size_in_mb / 4`

if [ "$desired_yg_in_mb" -gt "$max_sensible_yg_in_mb" ]

then

HEAP_NEWSIZE="${max_sensible_yg_in_mb}M"

else

HEAP_NEWSIZE="${desired_yg_in_mb}M"

fi
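To see what the modified script yields on the machine described, the arithmetic can be mirrored in a few lines. The sketch below assumes `system_memory_in_mb` is about 16384 and `system_cpu_cores` is 4; `HeapSizing` and its method names are hypothetical and only restate the shell logic above.

```java
class HeapSizing {
    // Mirrors the modified script: half is forced to 4096 once it exceeds 1024.
    static int maxHeapMb(int systemMemoryMb) {
        int half = systemMemoryMb / 2;
        int quarter = half / 2;          // computed from the unclamped half, as in the script
        if (half > 1024) {
            half = 4096;
        }
        if (quarter > 8192) {
            quarter = 8192;
        }
        return Math.max(half, quarter);
    }

    // Young gen: min(200 MB per core * cores, heap / 4).
    static int youngGenMb(int maxHeapMb, int cpuCores) {
        int maxSensible = 200 * cpuCores;
        int desired = maxHeapMb / 4;
        return Math.min(desired, maxSensible);
    }

    public static void main(String[] args) {
        int heap = maxHeapMb(16384);
        System.out.println("MAX_HEAP_SIZE=" + heap + "M");
        System.out.println("HEAP_NEWSIZE=" + youngGenMb(heap, 4) + "M");
    }
}
```

With these inputs the result is a 4096 MB heap and an 800 MB young generation, which appears consistent with the "4096MB" and "800MB" values printed at the top of the startup log.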

How can this problem be solved?

My test code is as follows:

package iotdb;

import java.sql.*;

import java.text.ParseException;

import java.text.SimpleDateFormat;

import java.util.ArrayList;

import java.util.Date;

import java.util.List;

public class PerformanceTest2 {

public static final String URL = "jdbc:iotdb://192.168.235.101:6667/";

public static final int RECORD = 1000;

public static final int DEVICE_NUM = 10;

private static final int BATCH_NUM = 50;

public static final int INTERVAL = 15;

public static void createGroup() {

try (Connection connection = DriverManager.getConnection(URL, "root", "root");

Statement statement = connection.createStatement()) {

try {

statement.execute("SET STORAGE GROUP TO root.org1");

} catch (Throwable e) {

e.printStackTrace();

}

} catch (Throwable e) {

e.printStackTrace();

}

}

public static void insert(String startTime) {

List<Thread> list = new ArrayList<>();

try (Connection connection = DriverManager.getConnection(URL, "root", "root");

Statement statement = connection.createStatement()) {

for (int i = 0; i < DEVICE_NUM; i++) {

int finalI = i;

Thread thread = new Thread(() -> {

try {

TimeUtils timeUtils = new TimeUtils();

SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");

Date lastTime = sdf.parse(startTime);

for (int j = 0; j < RECORD; j++) {

String sql = "insert into root.org1.flow.device" + finalI + "(timestamp, forward_flow, reverse_flow, instant_flow) values(" + sdf.format(lastTime) + "," + 100 + "," + 0 + "," + 50 + ")";

statement.addBatch(sql);

lastTime = timeUtils.addSecond(lastTime, INTERVAL);

if (RECORD >= BATCH_NUM && j != 0 && j % BATCH_NUM == 0) {

statement.executeBatch();

statement.clearBatch();

System.out.println("batch insert");

}

}

statement.executeBatch();

statement.clearBatch();

System.out.println("final batch insert");

} catch (Throwable e) {

e.printStackTrace();

}

});

list.add(thread);

}

list.forEach(t -> {

t.start();

});

list.forEach(t -> {

try {

t.join();

} catch (InterruptedException e) {

e.printStackTrace();

}

});

System.out.println("insert finished.");

} catch (Throwable e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws ClassNotFoundException, SQLException {

Class.forName("org.apache.iotdb.jdbc.IoTDBDriver");

createGroup();

insert("2020-01-01 00:00:00");

query();

}

private static void query() {

try (Connection connection = DriverManager.getConnection(URL, "root", "root");

Statement statement = connection.createStatement()) {

ResultSet resultSet = statement.executeQuery("select count(*) from root.org1");

outputResult(resultSet);

} catch (Throwable e) {

e.printStackTrace();

}

}

private static void outputResult(ResultSet resultSet) throws SQLException {

if (resultSet != null) {

System.out.println("--------------------------");

final ResultSetMetaData metaData = resultSet.getMetaData();

final int columnCount = metaData.getColumnCount();

for (int i = 0; i < columnCount; i++) {

System.out.print(metaData.getColumnLabel(i + 1) + " ");

}

System.out.println();

while (resultSet.next()) {

for (int i = 1; ; i++) {

System.out.print(resultSet.getString(i));

if (i < columnCount) {

System.out.print(", ");

} else {

System.out.println();

break;

}

}

}

System.out.println("--------------------------\n");

}

}

}

The error is as follows:

java.sql.SQLException: Error occurs when closing statement.
at org.apache.iotdb.jdbc.IoTDBStatement.closeClientOperation(IoTDBStatement.java:150)
at org.apache.iotdb.jdbc.IoTDBStatement.close(IoTDBStatement.java:160)
at iotdb.PerformanceTest2.insert(PerformanceTest2.java:94)
at iotdb.PerformanceTest2.main(PerformanceTest2.java:102)
Suppressed: java.sql.SQLException: Error occurs when closing session at server. Maybe server is down.
at org.apache.iotdb.jdbc.IoTDBConnection.close(IoTDBConnection.java:120)
... 2 more
Caused by: org.apache.thrift.transport.TTransportException: Cannot write to null outputStream
at org.apache.thrift.transport.TIOStreamTransport.write(TIOStreamTransport.java:140)
at org.apache.thrift.protocol.TBinaryProtocol.writeI32(TBinaryProtocol.java:202)
at org.apache.thrift.protocol.TBinaryProtocol.writeMessageBegin(TBinaryProtocol.java:117)
at org.apache.thrift.TServiceClient.sendBase(TServiceClient.java:70)
at org.apache.thrift.TServiceClient.sendBase(TServiceClient.java:62)
at org.apache.iotdb.service.rpc.thrift.TSIService$Client.send_closeSession(TSIService.java:182)
at org.apache.iotdb.service.rpc.thrift.TSIService$Client.closeSession(TSIService.java:174)
at org.apache.iotdb.jdbc.IoTDBConnection.close(IoTDBConnection.java:118)
... 2 more
Caused by: org.apache.thrift.transport.TTransportException: Cannot write to null outputStream
at org.apache.thrift.transport.TIOStreamTransport.write(TIOStreamTransport.java:140)
at org.apache.thrift.protocol.TBinaryProtocol.writeI32(TBinaryProtocol.java:202)
at org.apache.thrift.protocol.TBinaryProtocol.writeMessageBegin(TBinaryProtocol.java:117)
at org.apache.thrift.TServiceClient.sendBase(TServiceClient.java:70)
at org.apache.thrift.TServiceClient.sendBase(TServiceClient.java:62)
at org.apache.iotdb.service.rpc.thrift.TSIService$Client.send_closeOperation(TSIService.java:366)
at org.apache.iotdb.service.rpc.thrift.TSIService$Client.closeOperation(TSIService.java:358)
at org.apache.iotdb.jdbc.IoTDBStatement.closeClientOperation(IoTDBStatement.java:145)
... 3 more

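A few questions:

1. Is pagination supported?

The SQL documentation seems to support offset and limit, so pagination should be possible.

2. Regular-expression matching or fuzzy queries on strings

The documentation does not seem to have a LIKE-style statement. If it is supported, could you give an example?

3. Is there a read/write benchmark report? I would like to understand the actual performance.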

When I write a Java program to develop an IoTDB application, I have to import all the jar files from the jdbc folder. It is not clear which ones the JDBC interface actually needs.

Please combine them into a single file, or tell me which files are required.
