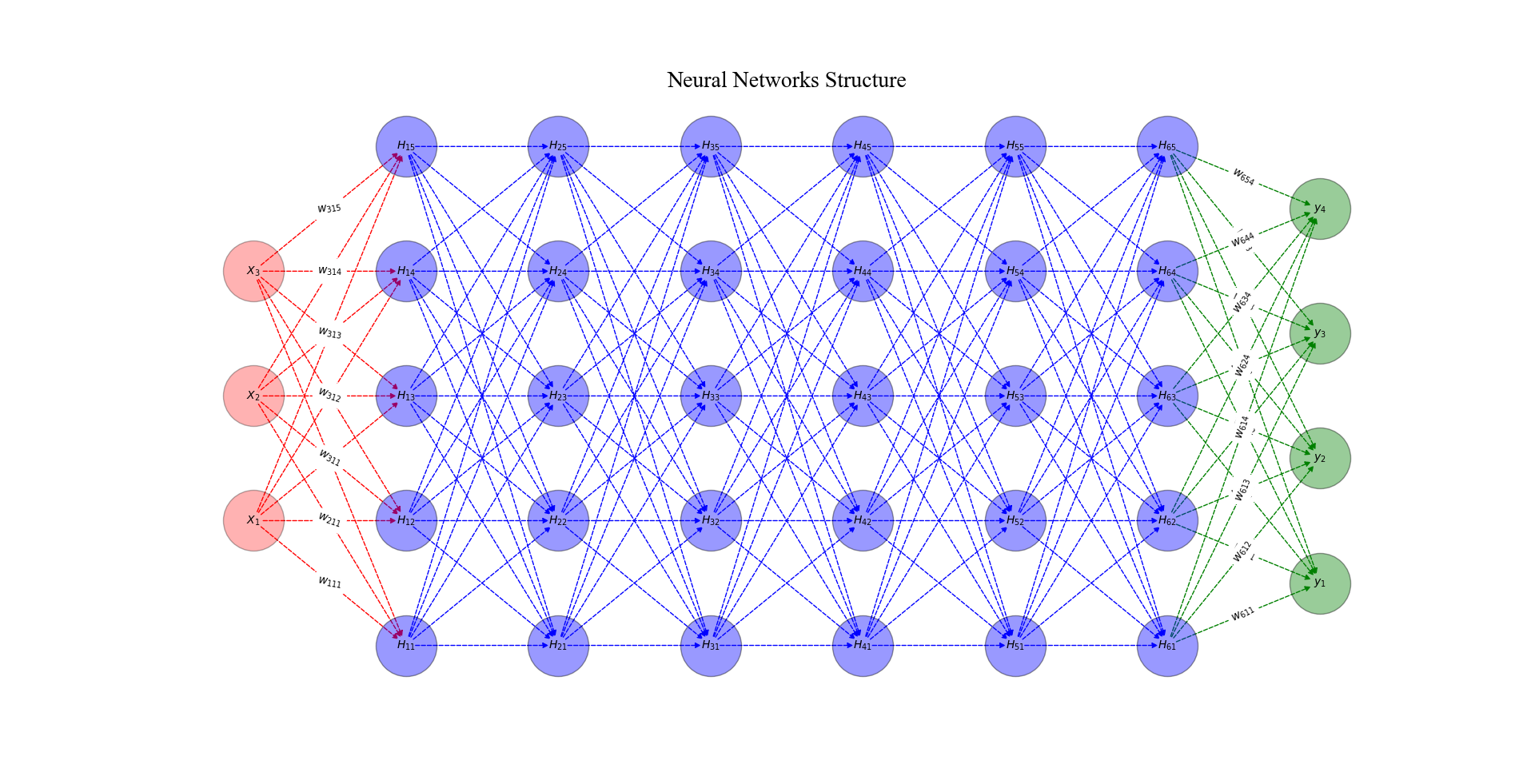

This repository contains a neural network regression model implemented from scratch. The goal of the project is to show clearly how a neural network works internally.

- Implementation of a feedforward neural network architecture.

- Configurable number of layers and neurons per layer.

- Support for various activation functions (e.g., sigmoid, ReLU).

- Flexible training options, including customizable learning rate and batch size.

- Efficient backpropagation algorithm for weight updates.

- Evaluation of model performance with regression metrics.

- Saving and loading of trained models for future use.
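To make the features above concrete, here is a minimal sketch of how such a network could be put together using only the Python standard library. It is not this repository's actual code: every name in it (`init_network`, `forward`, `train_batch`, `fit`, `mse`, `save_model`, `load_model`) is hypothetical, and the choices of a linear output layer, a mean squared error loss, and JSON as the storage format are assumptions made for illustration.

```python
import json
import math
import random

# Hypothetical names throughout; this is not the repository's actual API.
ACTIVATIONS = {
    # name: (function, derivative written in terms of the function's output)
    "sigmoid": (lambda z: 1.0 / (1.0 + math.exp(-z)), lambda a: a * (1.0 - a)),
    "relu": (lambda z: max(0.0, z), lambda a: 1.0 if a > 0.0 else 0.0),
}

def init_network(layer_sizes, seed=0):
    """Build one layer per consecutive size pair; w[j][i] links input i to neuron j."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        w = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
        layers.append({"w": w, "b": [0.0] * n_out})
    return layers

def forward(layers, x, activation):
    """Return the outputs of every layer, input included; the last layer is linear."""
    act, _ = ACTIVATIONS[activation]
    outputs = [x]
    for idx, layer in enumerate(layers):
        z = [sum(w * a for w, a in zip(row, outputs[-1])) + b
             for row, b in zip(layer["w"], layer["b"])]
        outputs.append(z if idx == len(layers) - 1 else [act(v) for v in z])
    return outputs

def train_batch(layers, batch, activation, lr):
    """One gradient descent step on a mini-batch, with gradients from backpropagation."""
    _, d_act = ACTIVATIONS[activation]
    grads = [{"w": [[0.0] * len(row) for row in layer["w"]], "b": [0.0] * len(layer["b"])}
             for layer in layers]
    for x, y in batch:
        outputs = forward(layers, x, activation)
        # Error at the linear output layer: derivative of 0.5 * (pred - target)^2.
        delta = [p - t for p, t in zip(outputs[-1], y)]
        for idx in reversed(range(len(layers))):
            inputs = outputs[idx]
            for j, d in enumerate(delta):
                grads[idx]["b"][j] += d
                for i, a in enumerate(inputs):
                    grads[idx]["w"][j][i] += d * a
            if idx > 0:
                # Push the error back through the weights and the hidden activation.
                w = layers[idx]["w"]
                delta = [sum(w[j][i] * delta[j] for j in range(len(delta))) * d_act(inputs[i])
                         for i in range(len(inputs))]
    for layer, grad in zip(layers, grads):
        for j in range(len(layer["b"])):
            layer["b"][j] -= lr * grad["b"][j] / len(batch)
            for i in range(len(layer["w"][j])):
                layer["w"][j][i] -= lr * grad["w"][j][i] / len(batch)

def fit(layers, data, activation="sigmoid", lr=0.1, batch_size=4, epochs=200, seed=0):
    """Shuffle the data each epoch and train on consecutive mini-batches."""
    rng = random.Random(seed)
    data = list(data)
    for _ in range(epochs):
        rng.shuffle(data)
        for start in range(0, len(data), batch_size):
            train_batch(layers, data[start:start + batch_size], activation, lr)
    return layers

def mse(layers, data, activation):
    """Mean squared error over a dataset of (inputs, targets) pairs."""
    total = 0.0
    for x, y in data:
        pred = forward(layers, x, activation)[-1]
        total += sum((p - t) ** 2 for p, t in zip(pred, y)) / len(y)
    return total / len(data)

def save_model(layers, path):
    with open(path, "w") as f:
        json.dump(layers, f)

def load_model(path):
    with open(path) as f:
        return json.load(f)

# Usage: fit y = 2x + 1 on a few points with one hidden layer of 8 neurons.
data = [([x / 10.0], [2.0 * x / 10.0 + 1.0]) for x in range(-10, 11)]
net = init_network([1, 8, 1])
before = mse(net, data, "sigmoid")
fit(net, data, activation="sigmoid", lr=0.1, batch_size=4, epochs=200)
after = mse(net, data, "sigmoid")
print(f"MSE before training: {before:.4f}, after: {after:.4f}")
```

The list passed to `init_network` sets the number of layers and neurons per layer, the `activation` argument selects the hidden-layer activation, and `lr` and `batch_size` control training. Plain Python lists keep every arithmetic step visible, at the cost of speed; an array library would be the usual choice for anything larger than a toy problem.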

Contributions are welcome! If you find any bugs or have suggestions for improvement, please open an issue or submit a pull request.

This project is licensed under the MIT License.

Note: This is a simplified implementation for educational purposes. For more complex tasks or better performance, use an established deep learning framework such as TensorFlow or PyTorch.