hanatos / vkdt

raw photography workflow that sucks less

Home Page: https://vkdt.org

License: BSD 2-Clause "Simplified" License


Test case:

--> the deletion confirmation message is still there, as if I had clicked 'delete image(s)' on this 2nd file.

Expectation: the confirmation message disappears if the selection changes

Video of the issue

Thanks

GLLM

vkdt is more than promising on the development side but I miss some tagging features on lighttable.

My workflow is the following:

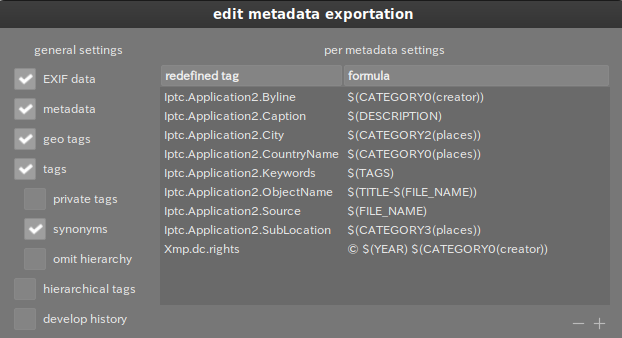

Here is an example of metadata export settings:

$(CATEGORYn(tag)) is the way to pick up the right tag.

Whenever I move my mouse or press a key, the preview moves to the right. It eventually drifts completely off the window and can only be recovered when the view randomly resets. Increasing the LOD reduces the distance that the preview moves per input event. Bisecting the commits reveals the issue started with ddc2379.

vkGetSwapchainImagesKHR(qvk.device, qvk.swap_chain, &qvk.num_swap_chain_images, NULL);

assert(qvk.num_swap_chain_images < QVK_MAX_SWAPCHAIN_IMAGES);

vkGetSwapchainImagesKHR(qvk.device, qvk.swap_chain, &qvk.num_swap_chain_images, qvk.swap_chain_images);

I was able to get it working by changing < to <=; my actual values were 4 <= 4.
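The off-by-one can be illustrated without Vulkan. This is only a sketch: `MAX_IMAGES`, `count_fits` and `clamp_count` are hypothetical stand-ins, not vkdt code. An array with N slots can hold exactly N images, so the capacity check should accept equality.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for QVK_MAX_SWAPCHAIN_IMAGES; 4 matches the
 * values reported above. */
#define MAX_IMAGES 4u

/* Returns 1 if `count` images fit in an array with MAX_IMAGES slots.
 * A count equal to the capacity still fits, hence <= rather than <. */
static int count_fits(uint32_t count)
{
  return count <= MAX_IMAGES;
}

/* Alternative to asserting: clamp the count before the second query so
 * that no more than MAX_IMAGES handles are ever requested. */
static uint32_t clamp_count(uint32_t count)
{
  return count < MAX_IMAGES ? count : MAX_IMAGES;
}
```

With a clamped count, the second `vkGetSwapchainImagesKHR` call writes at most that many handles and returns `VK_INCOMPLETE` if the swapchain owns more, so the array is never overrun.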

I just had a crash. Here is the console output:

[gui] monitor [0] XWAYLAND0 at 0 0

[gui] vk extension required by GLFW:

[gui] VK_KHR_surface

[gui] VK_KHR_xcb_surface

[gui] no joysticks found

[gui] no display profile file display.XWAYLAND0, using sRGB!

[gui] no display profile file display.XWAYLAND0, using sRGB!

vkdt: No such file or directory.

ptrace: Operation not permitted.

/opt/vkdt/6378: No such file or directory.

Warning: 'set logging off', an alias for the command 'set logging enabled', is deprecated.

Use 'set logging enabled off'.

Warning: 'set logging on', an alias for the command 'set logging enabled', is deprecated.

Use 'set logging enabled on'.

vkdt: No such file or directory.

[New LWP 6379]

[New LWP 6380]

[New LWP 6381]

[New LWP 6382]

[New LWP 6383]

[New LWP 6384]

[New LWP 6385]

[New LWP 6386]

[New LWP 6387]

[New LWP 6388]

[New LWP 6389]

[New LWP 6390]

[New LWP 6397]

[New LWP 6402]

[New LWP 6403]

[New LWP 6404]

[New LWP 6405]

[New LWP 6406]

[New LWP 6407]

[New LWP 6408]

[New LWP 6409]

[New LWP 6410]

[New LWP 6411]

[New LWP 6412]

[New LWP 6413]

[New LWP 6414]

[New LWP 6415]

[New LWP 6416]

[New LWP 6417]

[New LWP 6418]

[New LWP 6419]

[New LWP 6420]

[New LWP 6421]

[New LWP 6422]

[New LWP 6423]

[New LWP 6424]

This GDB supports auto-downloading debuginfo from the following URLs:

https://debuginfod.archlinux.org

Enable debuginfod for this session? (y or [n]) [answered N; input not from terminal]

Debuginfod has been disabled.

To make this setting permanent, add 'set debuginfod enabled off' to .gdbinit.

[Thread debugging using libthread_db enabled]

Using host libthread_db library "/usr/lib/libthread_db.so.1".

0x00007ff1ae1020bf in poll () from /usr/lib/libc.so.6

Warning: 'set logging off', an alias for the command 'set logging enabled', is deprecated.

Use 'set logging enabled off'.

Warning: 'set logging on', an alias for the command 'set logging enabled', is deprecated.

Use 'set logging enabled on'.

backtrace written to /tmp/vkdt-bt-6378.txt

recovery data written to /tmp/vkdt-crash-recovery.*

It might have been that the last opened folder (that is loaded on opening) does not exist anymore? (I'm on 6b4da4d, latest master as of now).

Hi @hanatos :)

Your paper "Fast Temporal Reprojection without Motion Vectors" is very interesting and solves a real problem: DLSS, XeSS and FSR 2.0 all require motion vectors, which most games don't provide.

The only mainstream upscaler without motion vectors is AMD FSR 1.0, which is not temporal. Yet FSR 1.0 has become a huge success and is e.g. supported by most video game emulators (Yuzu (Switch), RPCS3 (PS3), etc.). This enables us to improve the visual quality of low-polygon, old games (AKA most games that mankind has produced) and even improve the quality of recent games (e.g. Zelda Breath of the Wild).

As such, AMD FSR has been revolutionary (in practice, not necessarily for scholars).

Your algorithm is, to my knowledge, the only motion-vector-free competitor to FSR 1.0 and, being temporal, has a theoretical advantage.

Hence your algorithm, if contextually superior to FSR 1.0, would be revolutionary for gamers around the world!

If you think it could be competitive, the next step towards making it mainstream quickly would be to either:

implement it via ReShade/SweetFX (https://github.com/crosire/reshade), enabling it for any game out of the box,

or integrate it in a console emulator such as Yuzu or RPCS3. This would, BTW, enable easy comparison with FSR 1.0. It might also be (no idea) that FSR 1.0 is complementary and could be combined with your algorithm, since FSR is computationally very cheap and spatially based.

If you do this and it works, it will instantly become a huge success :)

Capture works with attached diff.

This is a list of thoughts about robust, all-purpose and useful ways to control color, worth considering adding:

Note: [xxx] denotes vectors processed element-wise

1. Fulcrum-based contrast

[RGB] = fulcrum * ( [RGB] / fulcrum )^contrast

Y = fulcrum * ( Y / fulcrum )^contrast in xyY (converted back to RGB). The weight parameter is user-defined.

2. Vibrance/saturation

[RGB] = Y + saturation * ( [RGB] - Y ), Y from XYZ space

[RGB] = Y + saturation * ( [RGB] - Y )^vibrance

3. ASC CDL

[RGB] = ( [slope] * [RGB] + [offset] )^[power], slope, offset and power being set for each channel

{RGB} - Y and pretend these are RGB params (but this assumes the RGB params are bounded, which they are not)

4. Colour zones

Not yet defined.

Something to allow selective hue/saturation/lightness shifts over a specific hue/saturation/lightness range. The problem is that the inputs need to be in a perceptually even space (IPT, JzAzBz, Lab), otherwise hue shifts will be steeper in blues and softer in reds. But these spaces will irremediably fuck every attempt at blending/masking/feathering between the corrected range and the rest of the spectrum: color noise and halos.

One possible way would be to apply the transfer functions (for hue/saturation shifting) in IPT/JzAzBz/Lab, then convert the result to RGB, and blend the output with the input using a gaussian/guided/whatever filter centered on the range to edit, but in linearly scaled RGB.

Another option could be to upsample to spectral domain, for example 8 primaries, drive a factor (gain) for each primary, and see what happens.

Hello !

When opening vkdt without a parameter (neither a file path nor a folder path), could vkdt show the open directory window (usually accessible via the collect > open directory menu), so that we do not need to open the collect module and then click on open directory each and every time we open vkdt?

Many thanks,

GLLM

Maybe a good idea for version 1.0 :)

Hi Hanatos,

when zooming on my photos in DR, I always wonder how far I'm zoomed in: am I at 100% (which I usually want) or more (sometimes OK, sometimes not)?

Do you think adding a zoom text indicator (xyz%) somewhere in DR would be feasible?

And if this is too much UX/UI for the moment, don't hesitate to say so :-)

Many thanks !

GLLM

for instance

module:i-pfm:main

module:display:main

connect:i-pfm:main:output:display:main:input

has problems initialising. inserting a simple thing (exposure for instance) solves the issue.

Hi,

I regularly use some modules and play with several parameters until my pictures look like $h!t ! :-D

I'd love to be able to reset all the parameters of a module to their default values with a single click.

Here is a worthless mockup:

A button on the right-hand side of the module header would reset all 3 parameter groups at once.

Thanks

GLLM

Steps to reproduce:

Preset "inpaint" should be located before "crop" in pipeline so that changing the crop will not move inpaint spots and so that the module can use regions outside the cropped region.

Hello @hanatos,

When using any slider to edit pictures, I always get the feeling that the effect (exposure, shadows, ... all of them) is applied very strongly even with a very tiny bit of wheeling/scrolling.

Is that on purpose?

I understand effects may actually be applied linearly, but I always overshoot in PP and every time must resort to adjusting a second time with the mouse at pixel level to get the expected amount of effect.

Could effects be applied less linearly and more exponentially, so that the user can apply a subtle amount of effect without struggle?

Note: I use vkdt on JPEG ;-) if this is of value

Thanks a lot,

GLLM

It'd be great if the last user action could be reverted (e.g. by pressing ctrl+z).

Hello,

I think something should be done so that JPEGs are properly rotated (according to the EXIF orientation flag) in lighttable & darkroom.

On my side, they appear un-rotated (in both modules), on pictures taken with

Thanks

GLLM

I have enabled debug, Freetype and exiv2 when building, i.e. $ make debug -j8

This problem goes away if built without debug, i.e. $ touch qvk/qvk.c ; make -j8

Ubuntu 20.10, mesa 20.3.3 from PPA kisak APU 2400G with Vega56 GPU

A couple months ago you gave me a custom .zip with some features for waveform, CIE diag, and color picker (smaller font so all 6 rows of cc24 would fit on UI), I don't really remember but I just got it working with an X-rite CC24 again and I'm afraid the fixes will get lost.

Just making a ticket here to get the ball rolling.

Hi,

yet another feature request ! :-o

In darkroom, would it be possible to have the ability to quickly reset the stack of the image being processed ?

I can do it in lighttable, but when, in DR, I messed things up in my pipeline, I need to go back in LT, make sure I'm on the right image and then use the selected images > reset history stack.

A one click action from within DR would be neat, whether in the 'Tweak all' or in the 'Pipeline config' tabs !

Thanks

GLLM

Hello @hanatos,

I am opening this issue to keep track of my proposal, which I understand you agree with: do not apply the RAW-dedicated modules llap and filmcurv when opening JPEG files, so that this works out of the box rather than requiring the user to modify the default-darkroom.i-jpg and default.i-jpg files.

The target is to get:

See: https://discuss.pixls.us/t/vkdt-dev-diary-pt2/28540/119?u=gllm

Thanks a lot,

GLLM

This is a deferred answer to the IRC question.

There are 3 main goals to dodging and burning:

Notice that the first goal is more easily covered by using an exposure compensation on areas defined by a guided filter mask (drawn or parametric), so this won't be covered here. Let's focus on the 2 others, that need actual painting.

Create a grey layer, blend it in softlight or overlay mode, paint in white over areas to dodge, and black over areas to burn. Dodging and burning tools in PS/Gimp work around the same idea, just abstracting the initial grey layer.

https://youtu.be/4qsLJArkAe4?t=65

Why it's good:

Why it's bad :

Create "lightness up" and "lightness down" (gamma-like) adjustment (tone) curves, and paint over their opacity masks to uncover the desired areas.

https://youtu.be/ZeEXY2kIpVo?t=686

Why it's good :

Why it's bad :

Same logic as before, but instead of tone curves, create an "exposure up" and "exposure down" layers, simply using a linear scaling of the scene-linear RGB code values. Then, paint over their opacity masks to uncover desired areas.

Why it's good :

You mainly need round soft brushes painting pure white and pure black with some opacity.

But… Photoshop has another really nice feature to its brushes : the flow.

https://youtu.be/-4qeu-TZLp8?t=200

The flow is basically simulating how wet ink would add up in a continuous stroke, but also helps blending seamlessly different strokes together, especially using hard brushes. See how it affects dodging and burning:

https://youtu.be/-4qeu-TZLp8?t=562

For implementations of this, maybe look at https://github.com/briend/libmypaint (Brien has been working on spectral color mixing for digital painting, I believe he has serious physically-accurate brushes in there).

Maybe this is close to what we want here:

https://github.com/briend/libmypaint/blob/d52e6bcd158fcb19cd62343289b7b1ba716be633/brushmodes.c#L291

Using vkdt to process a RAW file on current master (e758f1b) results in the following vulkan validation error:

[qvk] validation layer: Validation Error: [ VUID-VkComputePipelineCreateInfo-layout-00703 ] Object 0: handle = 0x22ff760000000f24, type = VK_OBJECT_TYPE_SHADER_MODULE; Object 1: handle = 0x4e1bb10000000f23, type = VK_OBJECT_TYPE_PIPELINE_LAYOUT; | MessageID = 0xe63c2d8b | Push constant is used in VK_SHADER_STAGE_COMPUTE_BIT of VkShaderModule 0x22ff760000000f24[]. But VkPipelineLayout 0x4e1bb10000000f23[] doesn't set VK_SHADER_STAGE_COMPUTE_BIT. The Vulkan spec states: layout must be consistent with the layout of the compute shader specified in stage (https://vulkan.lunarg.com/doc/view/1.2.198.0/linux/1.2-extensions/vkspec.html#VUID-VkComputePipelineCreateInfo-layout-00703)

vkdt: qvk/qvk.c:96: VkBool32 vk_debug_callback(VkDebugUtilsMessageSeverityFlagBitsEXT, VkDebugUtilsMessageTypeFlagsEXT, const VkDebugUtilsMessengerCallbackDataEXT *, void *): Assertion `0' failed.

processing JPGs does not show the same behaviour, so it must be a RAW-only operation which causes the issue.

associated gdb backtrace: vkdt-bt-236671.txt

vulkaninfo output: vulkaninfo.txt

I'm running ubuntu 20.04.3 with amdgpu 21.40.1.40501 (installed via amdgpu-install --opencl=rocr --vulkan=pro).

to reproduce:

In darkroom_enter(), changing char graph_cfg[PATH_MAX+100]; to char graph_cfg[PATH_MAX+100] = {0}; avoids hanging but the issue may be deeper.
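Why the initialiser matters can be shown in isolation. The snippet below is a sketch, not the actual `darkroom_enter()` code: `empty_cfg_length` is a hypothetical helper, and the `PATH_MAX` fallback is only there for platforms where `<limits.h>` does not define it. A local array without an initialiser has indeterminate contents, so treating it as a string before anything was written to it is undefined behaviour; `= {0}` makes it a valid empty string.

```c
#include <assert.h>
#include <limits.h>
#include <string.h>

#ifndef PATH_MAX
#define PATH_MAX 4096 /* fallback, an assumption for non-POSIX builds */
#endif

/* With "= {0}" every byte of the buffer is zero, so string functions
 * see an empty, properly terminated string even if no later code path
 * fills the buffer in. */
static size_t empty_cfg_length(void)
{
  char graph_cfg[PATH_MAX + 100] = {0};
  return strlen(graph_cfg);
}
```

This only hides the symptom if some code path is expected to fill the buffer and does not, which matches the remark that the issue may be deeper.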

dt_db_load_directory() should check whether the image exists, and/or the corresponding symlinks should be removed when the image is deleted.

Somewhat related: deleting an image in a tag collection doesn't delete the image (only the symlink).

EDIT: That's a reason to be able to get the tags per image...

Hello !

This was to be requested sooner or later ! :-D

Can we please have AVIF/HEIF format compatibility in vkdt ?

More and more cameras support it; just today the Fuji X-T5 was announced, and it supports it!

Samples if need be (can't attach them). The author made them publicly available (see descriptions):

https://www.flickr.com/photos/michael_dittrich/50939561216/

https://www.flickr.com/photos/michael_dittrich/50709286547/

Thanks in advance

GLLM

For example, I'm using v4l2 with a UVC webcam, which appears to be Rec.709. Showing a label for the current colourspace might be nice too.

I'm just checking out what vkdt does, tried to run it on my archlinux install after building it from source. It crashes with this error:

[gui] monitor [0] LGD 0x0540 at 0 0

[gui] monitor [1] DEL DELL U2515H at 1920 0

[gui] vk extension required by GLFW:

[gui] VK_KHR_surface

[gui] VK_KHR_wayland_surface

MESA-INTEL: warning: Performance support disabled, consider sysctl dev.i915.perf_stream_paranoid=0

vkdt: qvk/qvk.c:227: VkResult qvk_create_swapchain(): Assertion `qvk.num_swap_chain_images < QVK_MAX_SWAPCHAIN_IMAGES' failed.

[1] 144589 abort (core dumped) ./vkdt

The GPU is Intel HD graphics 630, this is the output from vulkaninfo: https://paste.sr.ht/~martijnbraam/69129017397a1c30779823a01a60a6bb41763a05

Is the computational graph (graph and nodes) general enough that it could be repurposed for, say, deep learning?

Or would it be better to start from scratch?

I'm trying to package vkdt for NixOS, which has nothing in the standard linux path.

When building, I get the following:

cmake flags:

-DCMAKE_FIND_USE_SYSTEM_PACKAGE_REGISTRY=OFF

-DCMAKE_FIND_USE_PACKAGE_REGISTRY=OFF

-DCMAKE_EXPORT_NO_PACKAGE_REGISTRY=ON

-DCMAKE_BUILD_TYPE=Release -DBUILD_TESTING=OFF

-DCMAKE_INSTALL_LOCALEDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/share/locale

-DCMAKE_INSTALL_LIBEXECDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/libexec

-DCMAKE_INSTALL_LIBDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/lib

-DCMAKE_INSTALL_DOCDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/share/doc/vkdt

-DCMAKE_INSTALL_INFODIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/share/info

-DCMAKE_INSTALL_MANDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/share/man

-DCMAKE_INSTALL_OLDINCLUDEDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/include

-DCMAKE_INSTALL_INCLUDEDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/include

-DCMAKE_INSTALL_SBINDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/sbin

-DCMAKE_INSTALL_BINDIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/bin

-DCMAKE_INSTALL_NAME_DIR=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748/lib

-DCMAKE_POLICY_DEFAULT_CMP0025=NEW

-DCMAKE_OSX_SYSROOT=

-DCMAKE_FIND_FRAMEWORK=LAST

-DCMAKE_STRIP=/nix/store/n95cd4q1dqzdvsiy1hmrkx9shwi3n4sh-gcc-wrapper-11.3.0/bin/strip

-DCMAKE_RANLIB=/nix/store/n95cd4q1dqzdvsiy1hmrkx9shwi3n4sh-gcc-wrapper-11.3.0/bin/ranlib

-DCMAKE_AR=/nix/store/n95cd4q1dqzdvsiy1hmrkx9shwi3n4sh-gcc-wrapper-11.3.0/bin/ar

-DCMAKE_C_COMPILER=gcc

-DCMAKE_CXX_COMPILER=g++

-DCMAKE_INSTALL_PREFIX=/nix/store/cq585gb6wn8b2khl3jm3lbw0iinqpjk1-vkdt-8d03c97b128c2a848f9baf219269dee9eb010748

CMake Warning:

Ignoring extra path from command line:

".."

CMake Error: The source directory "/build/source" does not appear to contain CMakeLists.txt.

So far, modules/nodes are assumed to have opaque alpha channel (== 1 for each pixel) but the pixel data is RGBalpha nonetheless. Alpha blending can happen using a blending node.

The limitations of this design are:

In the spirit of a full-fledged Porter-Duff alpha compositing with minimal pipeline assumptions and general use case, here is what I propose:

{ R * a * ma, G * a * ma, B * a * ma, a*ma }, meaning alpha <- alpha * mask alpha.

transparent node output OVER opaque node input ?

blending.h API and expose it full in-node. The blending node will be made of only this, other nodes will inherit it too but with background forced to node input and foreground forced to node output. (as agreed on IRC)

d, such that RGBa = { r * d, g * d, b * d, a } (useful to use multiply blending mode in place of overlay, to overlay a texture on a base image. The foreground texture could be adjusted such that "grey" hits 1, then it would act as a bump map under the multiplication).

e, such that RGBa = { r * e, g * e, b * e, a * e } (dissolve(F, 0.5) PLUS dissolve(B, 0.5) = physically-accurate double exposure).

o such that RGBa = { r, g, b, MIN(a * o, 1) } (useful to force an opaque background)

Tell me what you think.

[1] http://graphics.pixar.com/library/Compositing/paper.pdf

[2] http://graphics.pixar.com/library/DeepCompositing/paper.pdf

[3] https://blender.stackexchange.com/a/67371

the mouse-over on individual difference areas is hard to use while adjusting v4l2 camera settings such as white balance, saturation, etc., with another application, ie qv4l2, that requires mouse input.

Building master on ArchLinux fails with error:

gui/gui.c:181:25: error: use of undeclared identifier 'FLT_MAX'

vkdt.view_look_at_x = FLT_MAX;

^

gui/gui.c:182:25: error: use of undeclared identifier 'FLT_MAX'

vkdt.view_look_at_y = FLT_MAX;

^

Adding #include <float.h> in src/gui/gui.c allowed build to succeed.
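A reduced version of the fix, as a sketch: `view_t`, `view_reset` and `view_is_unset` are hypothetical stand-ins for the vkdt globals, and reading `FLT_MAX` as an "unset" sentinel is an assumption. The point is only that `FLT_MAX` is declared in `<float.h>`, so the header must be included directly instead of relying on another header pulling it in.

```c
#include <assert.h>
#include <float.h> /* declares FLT_MAX */

/* Hypothetical stand-in for the two fields assigned in gui/gui.c. */
typedef struct
{
  float view_look_at_x;
  float view_look_at_y;
} view_t;

/* Mark the view centre as unset by storing the largest finite float. */
static void view_reset(view_t *v)
{
  v->view_look_at_x = FLT_MAX;
  v->view_look_at_y = FLT_MAX;
}

/* Exact comparison is fine here: the sentinel is stored, never computed. */
static int view_is_unset(const view_t *v)
{
  return v->view_look_at_x == FLT_MAX && v->view_look_at_y == FLT_MAX;
}
```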

Thumbnails are not loading. If I open a directory that I have not opened before, I just see a bunch of "fly" icons (or "bomb" icons in the latest version), but the thumbnails are not showing up. If I click on an image, it opens up fine in darkroom mode. After that, a thumbnail for this particular image will appear in lighttable mode too. If I open a directory that I opened in vkdt before, thumbnails show up fine.

I tried bisecting, and the first commit where the problem appears seems to be 5a9ddaa.

I tried running with -d all and in the console I see repeated messages like (this is with the latest commit 5739d25 )

[ERR] module dn2 has no connectors!

[gui] glfwGetVersionString() : 3.3.6 X11 GLX EGL OSMesa clock_gettime evdev shared

[gui] monitor [0] DisplayPort-0 at 0 0

[gui] vk extension required by GLFW:

[gui] VK_KHR_surface

[gui] VK_KHR_xcb_surface

[qvk] dev 0: vendorid 0x1002

[qvk] dev 0: AMD RADV RENOIR

[qvk] max number of allocations -1

[qvk] max image allocation size 16384 x 16384

[qvk] max uniform buffer range 4294967295

[qvk] dev 1: vendorid 0x10005

[qvk] dev 1: llvmpipe (LLVM 13.0.1, 256 bits)

[qvk] max number of allocations -1

[qvk] max image allocation size 16384 x 16384

[qvk] max uniform buffer range 65536

[ERR] device does not support requested feature shaderSampledImageArrayDynamicIndexing, trying anyways

[ERR] device does not support requested feature shaderStorageImageArrayDynamicIndexing, trying anyways

[ERR] device does not support requested feature inheritedQueries, trying anyways

[qvk] picked device 0 without ray tracing support

[qvk] num queue families: 2

[qvk] available surface formats:

[qvk] B8G8R8A8_SRGB

[qvk] B8G8R8A8_UNORM

[qvk] colour space: 0

[gui] no joysticks found

[gui] no display profile file display.DisplayPort-0, using sRGB!

[gui] no display profile file display.DisplayPort-0, using sRGB!

[qvk] available surface formats:

[qvk] B8G8R8A8_SRGB

[qvk] B8G8R8A8_UNORM

[qvk] colour space: 0

[db] allocating 1024.0 MB for thumbnails

[perf] upload source total: 0.014 ms

[perf] upload for raytrace: 0.229 ms

[mem] images : peak rss 0.00390625 MB vmsize 0.00390625 MB

[mem] staging: peak rss 0.000244141 MB vmsize 0.000244141 MB

[perf] record cmd buffer: 0.329 ms

[perf] i-bc1 main : 0.004 ms

[perf] total time: 0.004 ms

[perf] [thm] ran graph in 0ms

[perf] upload source total: 0.011 ms

[perf] upload for raytrace: 0.007 ms

[mem] images : peak rss 0.00390625 MB vmsize 0.00390625 MB

[mem] staging: peak rss 0.000244141 MB vmsize 0.000244141 MB

[perf] record cmd buffer: 0.051 ms

[perf] i-bc1 main : 0.003 ms

[perf] total time: 0.003 ms

[perf] [thm] ran graph in 0ms

[ERR] could not open directory '(null)'!

[ERR] [thm] no images in list!

[qvk] available surface formats:

[qvk] B8G8R8A8_SRGB

[qvk] B8G8R8A8_UNORM

[qvk] colour space: 0

[perf] upload source total: 0.053 ms

[perf] upload for raytrace: 0.024 ms

[mem] images : peak rss 0.00390625 MB vmsize 0.00390625 MB

[mem] staging: peak rss 0.000244141 MB vmsize 0.000244141 MB

[perf] record cmd buffer: 0.180 ms

[perf] i-bc1 main : 0.015 ms

[perf] total time: 0.015 ms

[perf] [thm] ran graph in 0ms

[perf] upload source total: 0.046 ms

[perf] upload for raytrace: 0.015 ms

[mem] images : peak rss 0.00390625 MB vmsize 0.00390625 MB

[mem] staging: peak rss 0.000244141 MB vmsize 0.000244141 MB

[perf] record cmd buffer: 0.136 ms

[perf] i-bc1 main : 0.013 ms

[perf] total time: 0.013 ms

[perf] [thm] ran graph in 0ms

and then repeating similar messages.

Feel free to shoo me away for trying alpha software, but...

./vkdt /whatever/path/to/photos

[pipe] [global init] cannot open modules directory!

[gui] monitor [0] eDP-1 at 0 0

[gui] vk extension required by GLFW:

[gui] VK_KHR_surface

[gui] VK_KHR_xcb_surface

INTEL-MESA: warning: Performance support disabled, consider sysctl dev.i915.perf_stream_paranoid=0

[gui] no display profile file display.eDP-1, using sRGB!

[gui] no display profile file display.eDP-1, using sRGB!

vkdt: ../ext/imgui/imgui_draw.cpp:1909: ImFont* ImFontAtlas::AddFontFromFileTTF(const char*, float, const ImFontConfig*, const ImWchar*): Assertion `(0) && "Could not load font file!"' failed.

fish: './vkdt' terminated by signal SIGABRT (Abort)

I'm on commit ffd4654 here.

the following lists and prioritises a few key features that need work to make vkdt useful for production use.

this is currently work in progress and certainly not in any good order yet or complete. shout if you see changes needed.

ab module to compare two history states

legacy_params as in dt or for versioned params and main.comp)

grade module

wavelet module

zones module

dt_draw postscript interpreter)

ca module

eq wavelet with manual edge weight, threshold, and scale curves)

steps to reproduce:

observed behaviour:

expected behaviour:

here:

blue.lut.gz

extract to vkdt/bin/data/blue.lut to make examples/quake.cfg work.

Hi,

I often want to retouch several photos in a row, and switch from one to another when doing so.

Thus, I'd like to be able to move to the next or previous image of the folder while in darkroom.

Is this something you could consider ?

Thanks a lot

GLLM

reminder for myself, see this comment: https://discuss.pixls.us/t/vkdt-devel-diary/19505/372

may be something with blur radii not scaling correctly with image size (though that shouldn't affect export)

When I open an image, I can zoom in and out with the mouse wheel. But once I move the mouse even one pixel, zoom resets back to the initial size.

Ubuntu 21.04, Xorg session, GCC 10.3.0, GLFW 3.3.2-1

imgui seems to set an error callback, but it wasn't helping to debug on AMD/wayland, perhaps too early in init.

verr.txt

glfw version string when logging is verbose

Steps to reproduce:

Expected behavior:

Observed behavior:

Hi,

for the last few commits, in lighttable, when selecting a picture (via a simple click), the highlight drawn around the thumbnail flashes twice in a weird fashion, as if the thumbnail was very quickly selected, unselected, and selected again.

Not sure this is expected

Thanks

GLLM

As explained on pixls.us, I already did a package of vkdt for fedora, and I'm now trying to create a flatpak one.

I open this issue to centralise a bit all issues I found along the way. Here is a simple list:

.desktop file and or flatpak, ... useful to have unique app id

.desktop file with probably an icon

I'm currently trying to get a clang compiler working with LLVM to be able to compile the project.

Even if I know it can be a burden, I don't think we can avoid local edits and masking. If only for one reason, it would be because most software doesn't blend in linear space as it should and doesn't make correct use of associated alpha blending.

Use cases involving local edits can be :

The way current dt is doing it is problematic though, because drawn masks are blended from the input/output of the current module, and the parametric blending depends on what is done earlier in the pipe. Set up a luminance parametric mask for color edits, change the exposure earlier, and your mask is lost. Also, masks need to be defined for each filter, possibly duplicated (even if the last raster mask option is nice).

Some filters make sense at a general level (colour input/output transforms, camera exposure compensation, denoising, LUTs, reconstructive deblurring), some make sense at a local level (healing/cloning/in-painting), some make sense at both (luminance/colour adjustments, blurring/local contrast). For this reason, having reorderable and multi-instantiable filters is a necessity.

So, I think it should be better to have a first round of global edits on the raw file, for operations that make sense at the scene level (relighting, spectrum modification), at the camera level (white balance, exposure compensation, demosaic, colour profile, lens profile), and the signal processing/reconstruction (healing, inpainting). At this step, I think the use of masking/blending equals tempering bad filters that break, so it should not happen ideally.

Then, a mask manager would split the global image into zones, defined by drawn and/or parametric masks or by auto/assisted image segmentation. Each zone would be loaded with the required filters, upon request, and possibly processed in parallel.

After that, the zones are blended with a proper associated alpha, and go to the final step of global edits (HDR/filmic curves, gamut mapping, output colour profile, film emulation LUTs, possibly brushed dodging & burning, etc.).

In this architecture, the zones run in parallel, each loaded with its own filters stacked in series, so we limit the dependency of masking parameters to the initial step of global edits, and we don't need to blend after every filter. I don't think parametric blending will be necessary at all for these filters (inside zones), if algos behave properly (just a regular opacity, maybe some blendings like multiply or average).

I don't believe in several pipes (preview/full-size) because some operations are not scale-invariant and the branching it involves makes a lot of trouble to maintain, regarding scaling of masks coordinates and such. I would just use 2 intermediate full-size raster caches, to store the image state before and after the zones splitting, and maybe a third right after the demosaicing and denoising step, because these can be heavy. Or maybe just abuse the RAM and cache every filter's output as long as there is still space (8 GB = 20 copies of a 24 Mpx image in RGBa single-precision float).
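The closing estimate can be checked with a few lines of arithmetic. The helper names below are made up for illustration, and 8 GB is taken as 8 * 10^9 bytes (an assumption; with 8 GiB the count is slightly higher).

```c
#include <assert.h>
#include <stdint.h>

/* Size in bytes of one uncompressed image buffer. */
static uint64_t image_bytes(uint64_t pixels, uint64_t channels,
                            uint64_t bytes_per_channel)
{
  return pixels * channels * bytes_per_channel;
}

/* Number of whole image copies that fit in a memory budget. */
static uint64_t copies_that_fit(uint64_t budget_bytes, uint64_t img_bytes)
{
  return budget_bytes / img_bytes;
}
```

A 24 Mpx image with 4 channels of 4-byte floats takes 24,000,000 * 4 * 4 = 384,000,000 bytes, and 8,000,000,000 / 384,000,000 rounds down to 20 copies, in line with the figure quoted above.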
