What is ml-camera?

How does this app work?

Requirements

This is a demo Flask app that lets you take a picture in a mobile browser and send it to the Google Cloud Vision API for label detection or text extraction.

At a high level, this app does the following:

- Asks the user for access to their phone's camera (front or back).

- Once the user grants access, video starts streaming.

- The user chooses whether to detect what is in the image or extract text from it by clicking the appropriate link.

- The image is then sent to the server, along with the name of the service to use (label detection or text extraction).

- The server sends the image to the Vision API, which returns either a label or the text extracted from the image. The server then sends this result back to the client.
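The server-side part of the steps above can be sketched roughly as follows. This is a minimal illustration, not the code in app.py: it assumes the browser sends the captured frame as a base64 data URL together with a service name, and the names `decode_data_url`, `handle_image`, and the `"service"` / `"image"` keys are hypothetical. The Vision API calls are replaced by stand-in functions so the sketch runs without credentials.

```python
import base64


def decode_data_url(data_url: str) -> bytes:
    """Return the raw image bytes from a 'data:image/png;base64,...' string."""
    header, _, payload = data_url.partition(",")
    if not header.startswith("data:") or not header.endswith(";base64"):
        raise ValueError("expected a base64 data URL")
    return base64.b64decode(payload)


def handle_image(message: dict, services: dict) -> dict:
    """Decode the image and run the requested service on it.

    `message` is the assumed client payload: {"service": ..., "image": ...}.
    `services` maps a service name to a callable that takes image bytes.
    """
    name = message["service"]
    if name not in services:
        return {"service": name, "error": "unknown service"}
    image_bytes = decode_data_url(message["image"])
    return {"service": name, "result": services[name](image_bytes)}


# Stand-in services (assumptions) so the sketch runs without the Vision API.
stub_services = {
    "label": lambda data: [("laptop", 0.95)],
    "text": lambda data: "hypothetical extracted text",
}

payload = "data:image/png;base64," + base64.b64encode(b"fake image bytes").decode("ascii")
reply = handle_image({"service": "label", "image": payload}, stub_services)
print(reply)
```

In a Flask-SocketIO app, a function like `handle_image` would typically be called from an event handler, and its return value sent back to the browser with `emit`.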

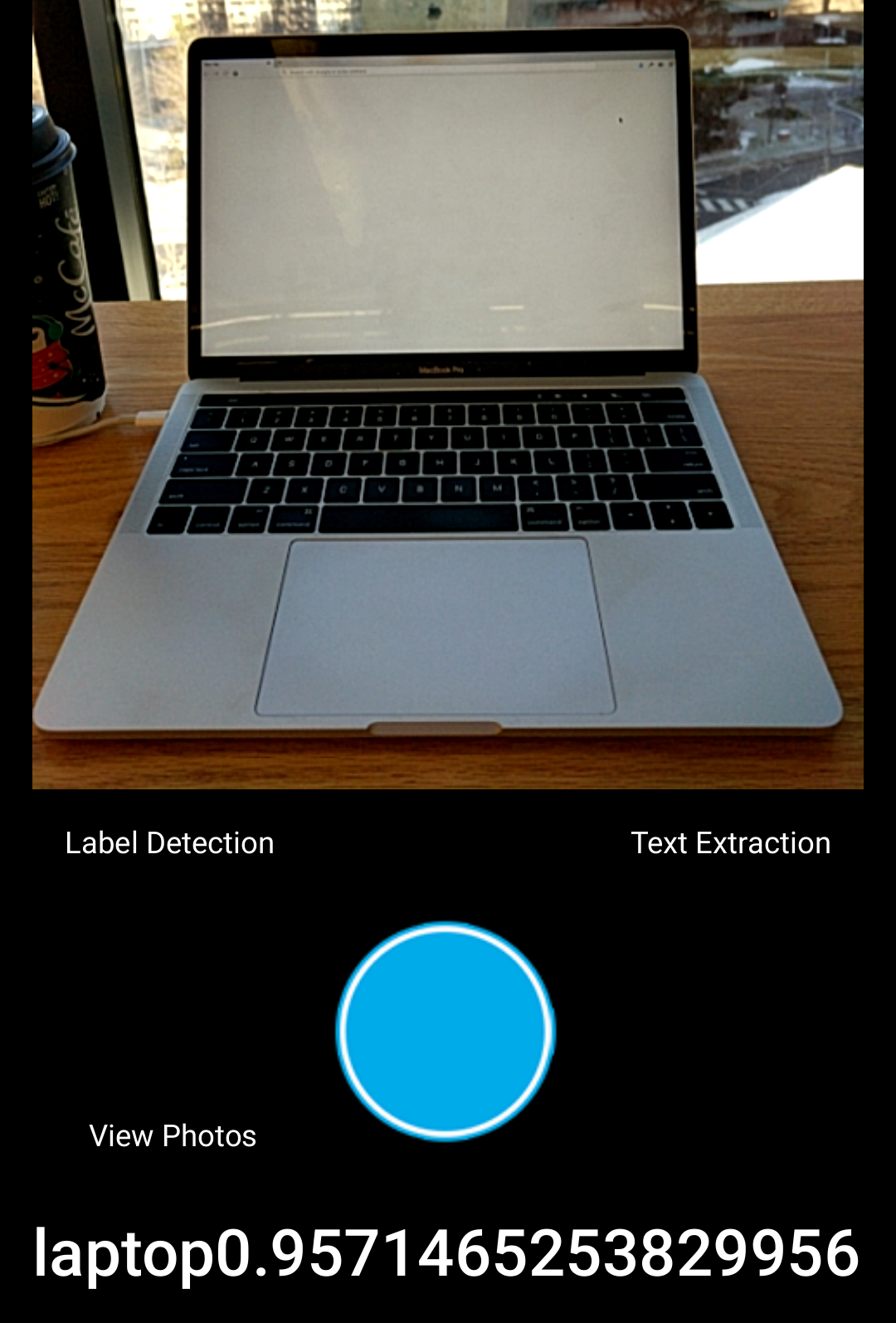

Example of the Google Cloud Vision API successfully labeling a laptop. In addition to the label, a confidence score is returned. In this example, Google is 95% confident that the image is a laptop.

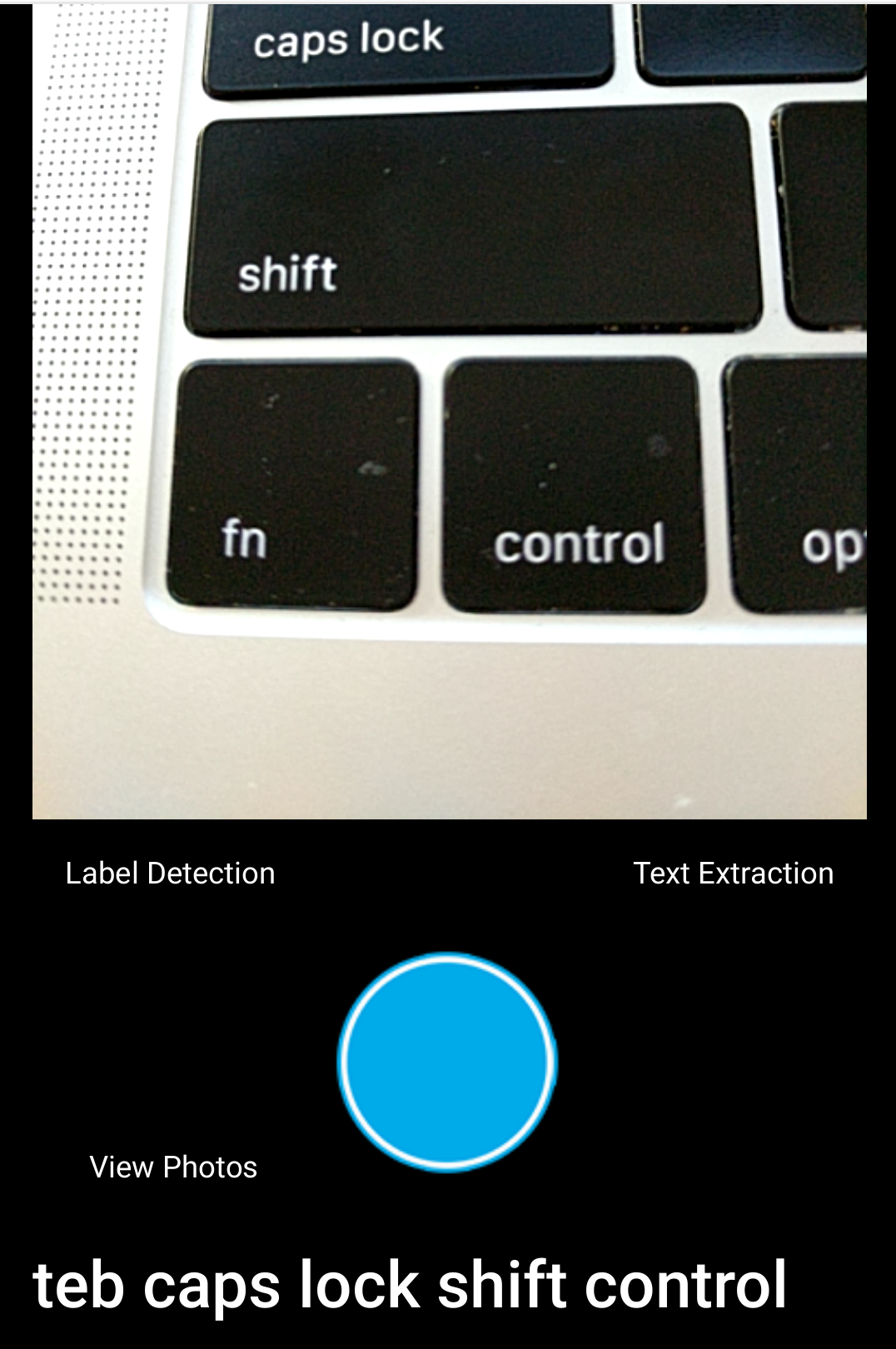

Example of the Google Cloud Vision API extracting text from a keyboard.

This app uses the following Python modules. `io` and `datetime` are part of the standard library; the others need to be installed:

- numpy

- google.cloud.vision

- io

- datetime

- flask

- flask_socketio

- requests
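As a quick check before running the app, something like the following reports which of the modules above cannot be imported. It is only a convenience sketch; `missing_modules` is a hypothetical helper, not part of this repo. Note that the names here are import names: on PyPI, `google.cloud.vision` is published as `google-cloud-vision` and `flask_socketio` as `Flask-SocketIO`.

```python
import importlib

# Import names taken from the list above.
REQUIRED = [
    "numpy",
    "google.cloud.vision",
    "io",
    "datetime",
    "flask",
    "flask_socketio",
    "requests",
]


def missing_modules(names):
    """Return the names from `names` that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:  # also covers ModuleNotFoundError
            missing.append(name)
    return missing


print("Missing modules:", missing_modules(REQUIRED))
```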

On the client side:

- jQuery

The main functionality of the app is contained in the following files:

- app.py - the main script.

- vision.py - a helper script that sends the image to either the label detection or the text extraction service.

- /static/js/camera.js - the main JavaScript file, which sends the image data to the Python server and renders the results from the Vision API.
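To give a feel for what a helper like vision.py might contain, here is a hedged sketch of the two Vision API calls. It is not the actual file: the function names are hypothetical, and it assumes `google-cloud-vision` 2.x or later (where `vision.Image` is available) with credentials configured in the environment. The import is done inside each function so the module still loads when the library is not installed.

```python
def detect_labels(image_bytes: bytes) -> list:
    """Return (description, score) pairs from Vision label detection."""
    from google.cloud import vision  # lazy import; requires google-cloud-vision

    client = vision.ImageAnnotatorClient()  # picks up credentials from the environment
    response = client.label_detection(image=vision.Image(content=image_bytes))
    return [(label.description, label.score) for label in response.label_annotations]


def extract_text(image_bytes: bytes) -> str:
    """Return the text found in the image, or an empty string if none."""
    from google.cloud import vision  # lazy import; requires google-cloud-vision

    client = vision.ImageAnnotatorClient()
    response = client.text_detection(image=vision.Image(content=image_bytes))
    annotations = response.text_annotations
    # When text is found, the first annotation holds the full detected text.
    return annotations[0].description if annotations else ""


def format_labels(labels) -> list:
    """Render (description, score) pairs as strings such as 'laptop (95%)'."""
    return [f"{description} ({score:.0%})" for description, score in labels]


# Example with a hand-written result, so no API call is made here.
print(format_labels([("laptop", 0.95)]))
```

Keeping the formatting step separate from the API calls makes it possible to test how results are displayed without network access or credentials.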

Here are some links, with code examples, that I found very useful:

- Flask: http://flask.pocoo.org/

- SocketIO: https://flask-socketio.readthedocs.io/en/latest/

- Google Cloud Vision API: https://cloud.google.com/vision/

- How to build a camera: https://developer.mozilla.org/en-US/docs/Archive/B2G_OS/API/Camera_API/Introduction