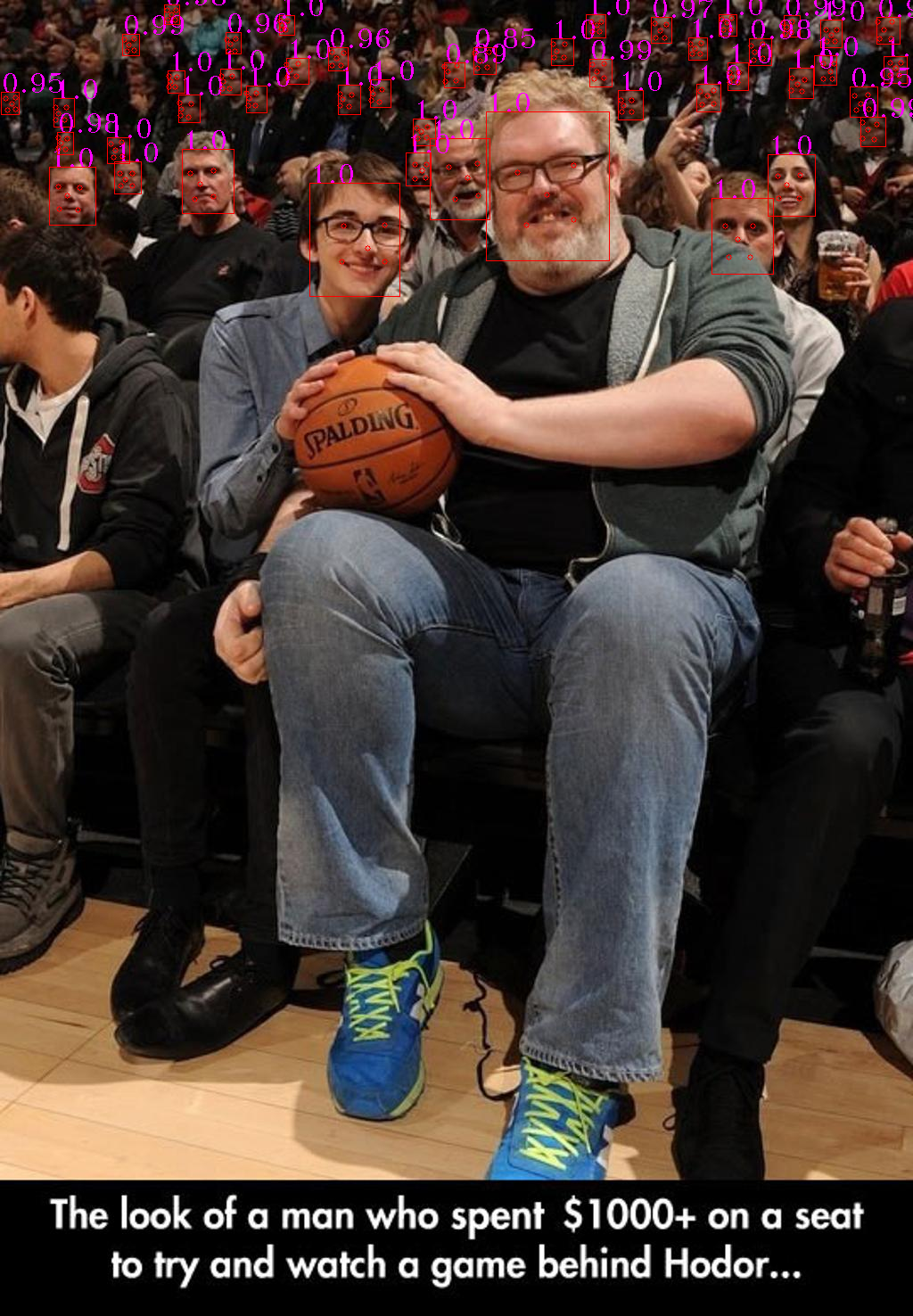

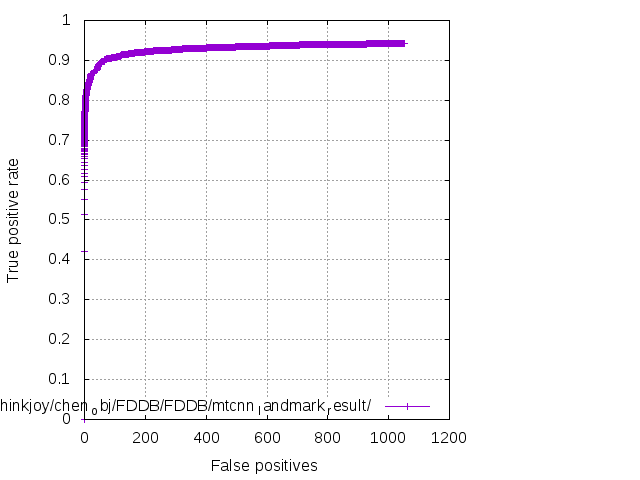

This project reproduces MTCNN, the method described in "Joint Face Detection and Alignment using Multi-task Cascaded Convolutional Networks".

- You need CUDA-compatible GPUs to train the model.

- TensorFlow 1.2.1

- Python 2.7

- Ubuntu 16.04

- CUDA 8.0

- Download the WIDER Face Dataset. Unzip it and put the `WIDER_train` folder in the `prepare_data` folder.

- Download the Landmark Dataset. Unzip it and put both `lfw_5590` and `net_7876` into the `prepare_data` folder.

- Run `gen_12net_data.py` to generate training data (face detection part) for PNet.

- Run `gen_landmark_data.py lfwnet PNet` to generate training data (face landmark detection part) for PNet.

- Run `gen_imglist.py PNet` to merge the two parts of the training data.

- Run `gen_tfrecords.py PNet` to generate the tfrecord for PNet.

- In `train_models/`, run `train_net.py PNet` to train PNet.

- After training PNet, run `gen_hard_example.py --test_mode PNet` to generate training data (face detection part) for RNet.

- Run `gen_landmark_data.py lfwnet RNet` to generate training data (face landmark detection part) for RNet.

- Run `gen_imglist.py RNet` to merge the two parts of the training data.

- Run `gen_tfrecords.py RNet` to generate the tfrecords for RNet.

- In `train_models/`, run `train_net.py RNet` to train RNet.

- After training RNet, run `gen_hard_example.py --test_mode RNet` to generate training data (face detection part) for ONet.

- Run `gen_landmark_data.py lfwnet ONet` to generate training data (face landmark detection part) for ONet.

- Run `gen_imglist.py ONet` to merge the two parts of the training data.

- Run `gen_tfrecords.py ONet` to generate the tfrecords for ONet.

- In `train_models/`, run `train_net.py ONet` to train ONet.
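The three stages follow the same pattern, with only the face detection data step changing between PNet and the later networks. As a rough sketch, the command sequence can be written down as data. The helper names `stage_commands` and `full_pipeline` are hypothetical and not part of this repository; the script names and arguments are the ones listed in the steps above, and the sketch only builds the command strings without running anything.

```python
# Hypothetical helpers: they only list the commands from the steps above.
PREVIOUS_NET = {"RNet": "PNet", "ONet": "RNet"}


def stage_commands(net):
    """Return the ordered commands for one stage (PNet, RNet, or ONet)."""
    if net == "PNet":
        # PNet detection data comes straight from the WIDER Face annotations.
        detection = "gen_12net_data.py"
    elif net in PREVIOUS_NET:
        # Later stages mine hard examples with the previously trained network.
        detection = "gen_hard_example.py --test_mode " + PREVIOUS_NET[net]
    else:
        raise ValueError("unknown network: %r" % (net,))
    return [
        detection,
        "gen_landmark_data.py lfwnet " + net,
        "gen_imglist.py " + net,
        "gen_tfrecords.py " + net,
        "train_net.py " + net,  # run from train_models/
    ]


def full_pipeline():
    """Return the commands for all three stages in training order."""
    commands = []
    for net in ("PNet", "RNet", "ONet"):
        commands.extend(stage_commands(net))
    return commands


if __name__ == "__main__":
    for command in full_pipeline():
        print(command)
```

Each stage depends on the trained model from the stage before it, so the stages have to run in this order.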

- When training PNet, I merge the four kinds of data (pos, part, landmark, neg) into one tfrecord, since their counts are in a ratio of almost 1:1:1:3. When training RNet and ONet, I generate four separate tfrecords, since their counts are not balanced. During training, I read 64 samples each from the pos, part, and landmark tfrecords and 192 samples from the neg tfrecord to construct a mini-batch.
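The fixed per-source counts give a mini-batch of 64 + 64 + 64 + 192 = 384 samples with a 1:1:1:3 ratio, whatever the sizes of the four pools. The sketch below illustrates this with NumPy index sampling as a stand-in for the four tfrecord readers. It is not the repository's input pipeline, and `sample_batch_indices` and the pool sizes are hypothetical.

```python
import numpy as np

# Per-source counts from the note above: 64 + 64 + 64 + 192 = 384 per batch.
BATCH_COUNTS = {"pos": 64, "part": 64, "landmark": 64, "neg": 192}


def sample_batch_indices(pool_sizes, rng, counts=BATCH_COUNTS):
    """Draw a fixed number of distinct indices from each source pool.

    pool_sizes maps a source name to the number of samples it holds.
    The batch ratio stays 1:1:1:3 even when the pools are unbalanced.
    """
    return {
        name: rng.choice(pool_sizes[name], size=count, replace=False)
        for name, count in counts.items()
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Made-up, unbalanced pool sizes for illustration only.
    pools = {"pos": 5000, "part": 20000, "landmark": 3000, "neg": 100000}
    batch = sample_batch_indices(pools, rng)
    print({name: len(indices) for name, indices in batch.items()})
```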

- It's important for PNet and RNet to keep a high recall rate. When using a well-trained PNet to generate training data for RNet, I get more than 140,000 pos samples. When using a well-trained RNet to generate training data for ONet, I get more than 190,000 pos samples.

- Since MTCNN is a multi-task network, we should pay attention to the format of the training data. The format is:

  `[path to image][cls_label][bbox_label][landmark_label]`

  For a pos sample, cls_label=1, bbox_label is calculated, landmark_label=[0,0,0,0,0,0,0,0,0,0].

  For a part sample, cls_label=-1, bbox_label is calculated, landmark_label=[0,0,0,0,0,0,0,0,0,0].

  For a landmark sample, cls_label=-2, bbox_label=[0,0,0,0], landmark_label is calculated.

  For a neg sample, cls_label=0, bbox_label=[0,0,0,0], landmark_label=[0,0,0,0,0,0,0,0,0,0].
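To make the four cases concrete, here is a minimal sketch that builds one label record per sample type and writes it as a text line. The function names, the space-separated layout, and the two-decimal formatting are assumptions for illustration and may differ from the files the scripts in this repository actually write. The `tasks_for` helper follows the MTCNN paper, where pos and neg samples are used for classification, pos and part samples for box regression, and landmark samples for landmark regression.

```python
# cls_label values from the format description above.
CLS_LABEL = {"neg": 0, "pos": 1, "part": -1, "landmark": -2}


def make_label(sample_type, bbox=None, landmark=None):
    """Return (cls_label, bbox_label, landmark_label) with unused parts zeroed."""
    cls_label = CLS_LABEL[sample_type]
    if sample_type in ("pos", "part"):
        if bbox is None or len(bbox) != 4:
            raise ValueError("pos/part samples need 4 bbox offsets")
        bbox_label = [float(v) for v in bbox]
    else:
        bbox_label = [0.0] * 4
    if sample_type == "landmark":
        if landmark is None or len(landmark) != 10:
            raise ValueError("landmark samples need 10 landmark values")
        landmark_label = [float(v) for v in landmark]
    else:
        landmark_label = [0.0] * 10
    return cls_label, bbox_label, landmark_label


def format_line(path, label):
    """Join path, cls_label, bbox_label, and landmark_label into one text line."""
    cls_label, bbox_label, landmark_label = label
    values = ["%.2f" % v for v in bbox_label + landmark_label]
    return " ".join([path, str(cls_label)] + values)


def tasks_for(cls_label):
    """Which losses a sample contributes to, following the MTCNN paper."""
    return {
        "cls": cls_label in (0, 1),
        "bbox": cls_label in (1, -1),
        "landmark": cls_label == -2,
    }


if __name__ == "__main__":
    # "example.jpg" and the offsets are made-up values.
    print(format_line("example.jpg", make_label("part", bbox=[0.1, -0.2, 0.05, 0.3])))
```

Zero-filled fields are placeholders only: a sample should not contribute to the loss of a task it carries no label for.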

- Since there is less training data for landmarks, I use transformation, random rotation, and random flipping for data augmentation (the landmark detection result is still not that good).
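Flipping is the augmentation where the labels are easiest to get wrong, because mirroring the image also swaps the left and right landmarks. The sketch below shows one way to do it with NumPy. It assumes landmarks are stored as five (x, y) pairs normalized to the crop, in the order left eye, right eye, nose, left mouth corner, right mouth corner. That order and the function names are assumptions, not taken from this repository's code.

```python
import numpy as np

# Assumed order: left eye, right eye, nose, left mouth corner, right mouth corner.
# After mirroring, left and right points trade places.
FLIP_ORDER = [1, 0, 2, 4, 3]


def flip_with_landmarks(image, landmarks):
    """Mirror an H x W x C image and its (5, 2) normalized landmarks."""
    flipped_image = image[:, ::-1]
    flipped = np.array(landmarks, dtype=np.float64)
    flipped[:, 0] = 1.0 - flipped[:, 0]  # mirror the x coordinate
    return flipped_image, flipped[FLIP_ORDER]


def random_flip(image, landmarks, rng, prob=0.5):
    """Apply the flip with probability prob, otherwise return the inputs."""
    if rng.random() < prob:
        return flip_with_landmarks(image, landmarks)
    return image, np.array(landmarks, dtype=np.float64)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image = np.zeros((12, 12, 3), dtype=np.uint8)
    # Made-up landmark positions for illustration.
    points = [[0.2, 0.4], [0.7, 0.4], [0.5, 0.6], [0.3, 0.8], [0.65, 0.8]]
    _, new_points = random_flip(image, points, rng)
    print(new_points)
```

Without the reordering step, a mirrored sample would label the point on the image's right side as the left eye, which teaches the network inconsistent targets.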

MIT LICENSE

- Kaipeng Zhang, Zhanpeng Zhang, Zhifeng Li, Yu Qiao, "Joint Face Detection and Alignment using Multi-task Cascaded Convolutional Networks," IEEE Signal Processing Letters

- MTCNN-MXNET

- MTCNN-CAFFE

- deep-landmark