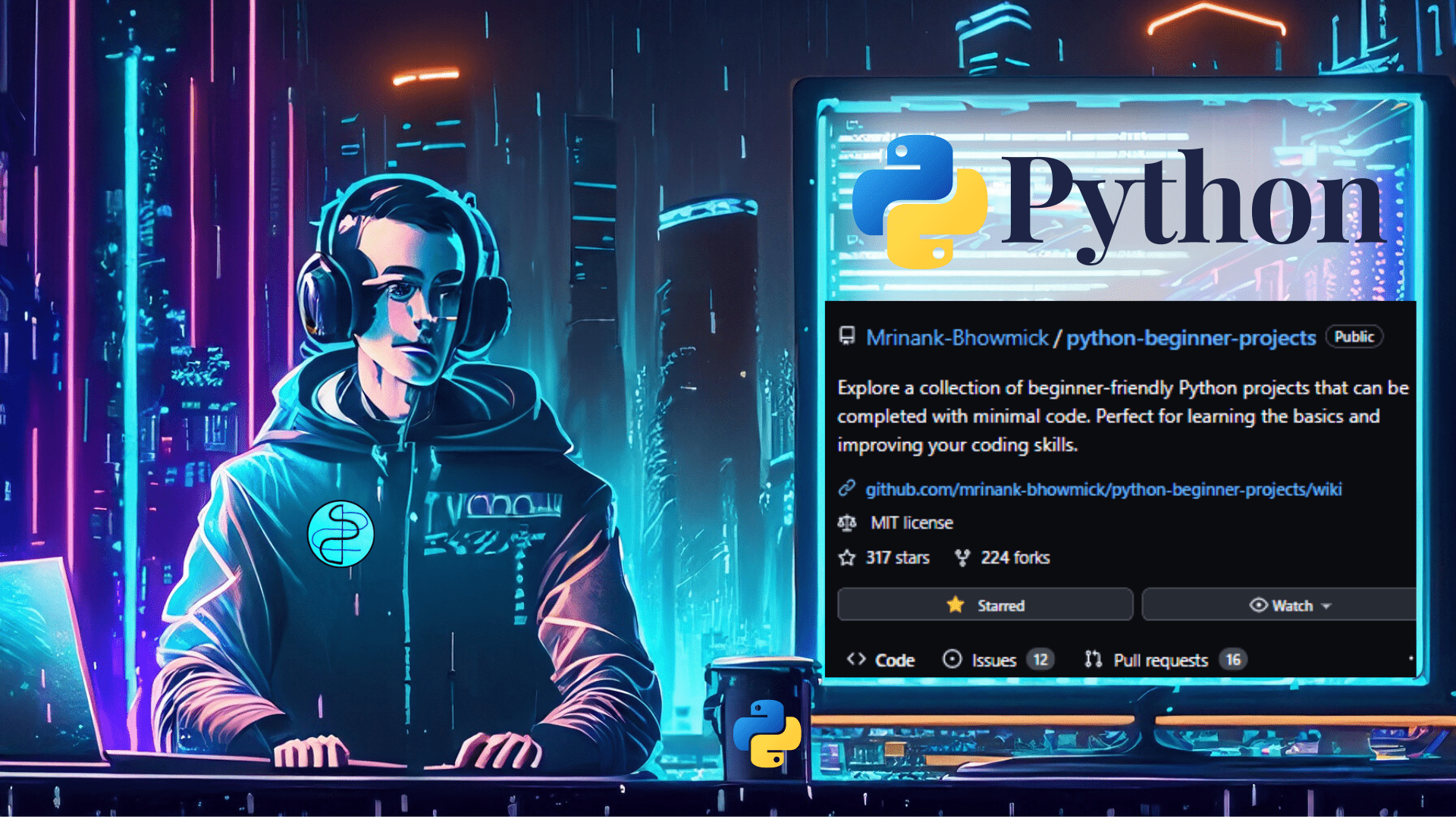

💠 This repository offers a variety of fascinating mini-projects written in Python.

💠 Working on Python projects will undoubtedly improve your skills and raise your profile in preparation for the globalised marketplace outside.

💠 Projects are a potential method to begin your career in this area.

💠 This language deserves a lot of attention in today's world, and why not since it can address so many real-world problems?