Pocket-sized implementations of machine learning models.

    $ git clone https://github.com/eriklindernoren/NapkinML
    $ cd NapkinML
    $ sudo python setup.py install

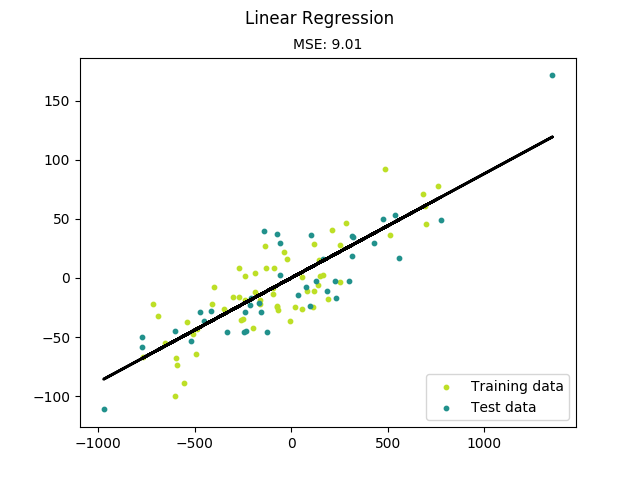

    import numpy as np

    class LinearRegression():
        def fit(self, X, y):
            # Normal equation: w = (X^T X)^-1 X^T y
            self.w = np.linalg.inv(X.T.dot(X)).dot(X.T).dot(y)

        def predict(self, X):
            return X.dot(self.w)

Run the example:

    $ python napkin_ml/examples/linear_regression.py

Figure: Linear Regression.
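A minimal usage sketch for the class above, redefined here so the block runs on its own. The toy data (`x`, `y`) and the explicit column of ones are illustrative assumptions, not part of the repository's example script. Since `fit` has no intercept term, a bias column is added to `X` by hand.

```python
import numpy as np

class LinearRegression():
    def fit(self, X, y):
        self.w = np.linalg.inv(X.T.dot(X)).dot(X.T).dot(y)

    def predict(self, X):
        return X.dot(self.w)

# Hypothetical noise-free data following y = 2x + 1
x = np.linspace(0, 1, 20)
X = np.column_stack([np.ones_like(x), x])  # first column acts as the intercept
y = 2 * x + 1

model = LinearRegression()
model.fit(X, y)
print(model.w)  # approximately [1, 2]: intercept, then slope
```

Inverting `X.T.dot(X)` only works when the columns of `X` are linearly independent, which holds for this toy data.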

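    import numpy as np

    class LDA():
        def fit(self, X, y):
            # Sum of the per-class covariance matrices (labels must be 0 and 1)
            cov_sum = sum([np.cov(X[y == val], rowvar=False) for val in [0, 1]])
            mean_diff = X[y == 0].mean(0) - X[y == 1].mean(0)
            # Direction that best separates the two class means
            self.w = np.linalg.inv(cov_sum).dot(mean_diff)

        def predict(self, X):
            # Negative projections map to class 1; the threshold is fixed at 0
            return 1 * (X.dot(self.w) < 0)

Run the example:

    $ python napkin_ml/examples/lda.py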
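A small sketch of how the LDA class might be used, with the class repeated so the block is self-contained. The two Gaussian blobs and the centering step are assumptions made for illustration: because `predict` thresholds the projection at 0, the features are centered first so that the threshold falls between the two classes.

```python
import numpy as np

class LDA():
    def fit(self, X, y):
        cov_sum = sum([np.cov(X[y == val], rowvar=False) for val in [0, 1]])
        mean_diff = X[y == 0].mean(0) - X[y == 1].mean(0)
        self.w = np.linalg.inv(cov_sum).dot(mean_diff)

    def predict(self, X):
        return 1 * (X.dot(self.w) < 0)

# Hypothetical toy data: two well-separated Gaussian blobs
rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal(loc=[-2.0, -2.0], scale=0.5, size=(50, 2)),  # class 0
    rng.normal(loc=[2.0, 2.0], scale=0.5, size=(50, 2)),    # class 1
])
y = np.array([0] * 50 + [1] * 50)

# Center the features so the fixed threshold at 0 sits between the classes
X = X - X.mean(axis=0)

model = LDA()
model.fit(X, y)
acc = (model.predict(X) == y).mean()
print(f"training accuracy: {acc:.2f}")
```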

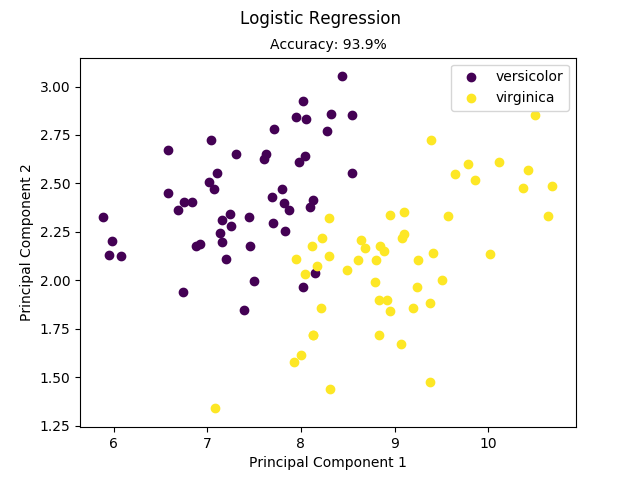

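    import numpy as np

    # sigmoid is not defined in this snippet; the standard logistic function is assumed
    def sigmoid(z):
        return 1 / (1 + np.exp(-z))

    class LogisticRegression():
        def fit(self, X, y, n_iter=4000, lr=0.01):
            self.w = np.random.rand(X.shape[1])
            for _ in range(n_iter):
                # Gradient descent step on the negative log-likelihood
                self.w -= lr * -(y - sigmoid(X.dot(self.w))).dot(X)

        def predict(self, X):
            return np.rint(sigmoid(X.dot(self.w)))

Run the example:

    $ python napkin_ml/examples/logistic_regression.py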

Figure: Classification with Logistic Regression.
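A hedged usage sketch for the logistic regression class, repeated here so the block is runnable by itself. The `sigmoid` helper is assumed to be the standard logistic function, and the toy blobs are made up for illustration. They are placed symmetrically around the origin because the model has no intercept term.

```python
import numpy as np

def sigmoid(z):
    # Standard logistic function (an assumption; the source does not show it)
    return 1 / (1 + np.exp(-z))

class LogisticRegression():
    def fit(self, X, y, n_iter=4000, lr=0.01):
        self.w = np.random.rand(X.shape[1])
        for _ in range(n_iter):
            self.w -= lr * -(y - sigmoid(X.dot(self.w))).dot(X)

    def predict(self, X):
        return np.rint(sigmoid(X.dot(self.w)))

np.random.seed(0)  # fit() draws its initial weights from NumPy's global RNG

# Hypothetical toy data: two blobs on opposite sides of the origin
rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal(loc=[-1.0, -1.0], scale=0.3, size=(20, 2)),  # class 0
    rng.normal(loc=[1.0, 1.0], scale=0.3, size=(20, 2)),    # class 1
])
y = np.array([0] * 20 + [1] * 20)

model = LogisticRegression()
model.fit(X, y)
acc = (model.predict(X) == y).mean()
print(f"training accuracy: {acc:.2f}")
```

The gradient is summed over all samples rather than averaged, so the default `lr=0.01` may need to be lowered for larger or unscaled datasets.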

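    import numpy as np

    class KNN():
        def predict(self, k, Xt, X, y):
            y_pred = np.empty(len(Xt))
            for i, xt in enumerate(Xt):
                # Indices of the k training points closest to xt
                idx = np.argsort([np.linalg.norm(x-xt) for x in X])[:k]
                # Majority vote (y must hold non-negative integer labels)
                y_pred[i] = np.bincount(y[idx]).argmax()
            return y_pred

Run the example:

    $ python napkin_ml/examples/knn.py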

Figure: Classification of the Iris dataset with K-Nearest Neighbors.
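The following sketch shows how the KNN class could be called on a tiny hand-made dataset instead of Iris. The points and labels are hypothetical, and the class is repeated so the block runs standalone. Note that `predict` takes the training data directly, so there is no separate `fit` step.

```python
import numpy as np

class KNN():
    def predict(self, k, Xt, X, y):
        y_pred = np.empty(len(Xt))
        for i, xt in enumerate(Xt):
            idx = np.argsort([np.linalg.norm(x-xt) for x in X])[:k]
            y_pred[i] = np.bincount(y[idx]).argmax()
        return y_pred

# Hypothetical training set: three points near (0, 0), three near (5, 5)
X = np.array([[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]], dtype=float)
y = np.array([0, 0, 0, 1, 1, 1])  # integer labels, as np.bincount requires

# Two query points, one close to each cluster
Xt = np.array([[0.2, 0.2], [5.5, 5.5]])

y_pred = KNN().predict(3, Xt, X, y)
print(y_pred)  # [0. 1.]
```

The predictions come back as floats because `y_pred` is allocated with `np.empty`, whose default dtype is `float64`.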

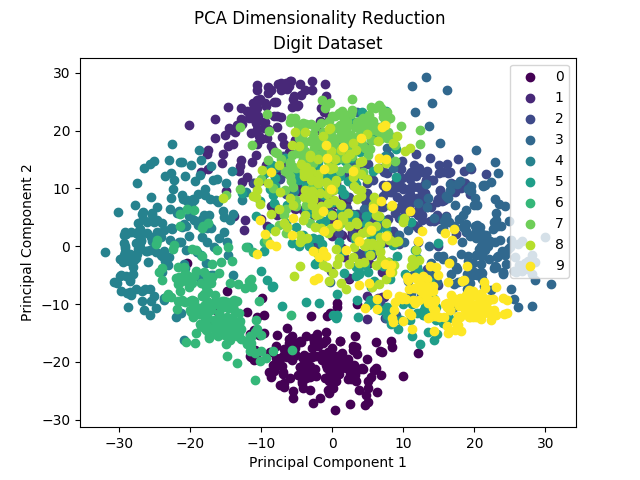

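    import numpy as np

    class PCA():
        def transform(self, X, dim):
            # Eigendecomposition of the feature covariance matrix
            e_val, e_vec = np.linalg.eig(np.cov(X, rowvar=False))
            # Sort eigenvectors by decreasing eigenvalue and keep the first `dim`
            idx = e_val.argsort()[::-1]
            e_vec = e_vec[:, idx][:, :dim]
            return X.dot(e_vec)

Run the example:

    $ python napkin_ml/examples/pca.py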

Figure: Dimensionality reduction with Principal Component Analysis.
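A short sketch of the PCA class applied to synthetic data, with the class repeated so the block is self-contained. The data (three independent features with very different spreads) is an assumption chosen so that the ordering of the components is easy to check.

```python
import numpy as np

class PCA():
    def transform(self, X, dim):
        e_val, e_vec = np.linalg.eig(np.cov(X, rowvar=False))
        idx = e_val.argsort()[::-1]
        e_vec = e_vec[:, idx][:, :dim]
        return X.dot(e_vec)

# Hypothetical data: 200 samples, 3 features with standard deviations 3, 1 and 0.1
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3)) * np.array([3.0, 1.0, 0.1])

# Project onto the two directions of largest variance
Z = PCA().transform(X, dim=2)
print(Z.shape)  # (200, 2)
print(Z.var(axis=0))  # first component carries the most variance
```

`transform` projects `X` without subtracting the mean, so the output is not centered; the variances along each component are unaffected by this. For a symmetric covariance matrix, `np.linalg.eigh` would be a possible alternative to `np.linalg.eig`.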